Hey friends,

I’ve noticed something about this new AI wave: the winners aren’t the ones who ship the fastest anymore — they’re the ones who survive the longest.

The first wave of AI was all about speed.

Whoever shipped faster won the headlines.

But now we’re entering a second wave — one that’s quieter, harder, and infinitely more important: sustainability.

I’ve been watching this shift happen up close. Teams that sprinted through last year’s “AI gold rush” are now running into a wall — technical debt, compliance nightmares, unreliable models, and user trust that never quite sticks.

The question isn’t “Can you build AI?” anymore.

It’s “Can you build an AI that lasts?”

In today’s edition, we’ll explore what it takes to scale AI beyond the prototype phase — and build systems that endure.

We’ll unpack:

Why fast-built models collapse under real-world pressure — and how companies like Canva and Netflix refactored to scale.

How governance went from a compliance burden to a competitive advantage.

What separates AI tools users try once from those they trust forever.

The five stages of scaling AI.

And finally — a hands-on playbook for leaders who want to go from pilot to platform without losing momentum.

If you’re building or leading an AI initiative, this one’s for you — because scaling AI isn’t about adding features.

It’s about building foundations that can outlive the hype.

PwC’s 2024 Workforce Radar Report

⚙️ Scalability — Refactor or Die

Speed comes first. Scale tests your soul.

When generative AI exploded into mainstream use, the first instinct was to build fast. Everyone wanted an MVP. Teams glued APIs together, stacked prompt chains, and demoed prototypes overnight. It worked, until it didn’t.

Source: McKinsey

The hard truth: Most of those fast builds can’t scale.

Take Canva, for example. When they launched Magic Write, it was powered by a single GPT-3 integration. It worked beautifully — until millions of users started hitting it at once. The team quickly realized that scaling a single-model system was like trying to build a skyscraper on sand.

So, they refactored. Instead of one model, Canva built an AI layer — a shared foundation that connects multiple models (OpenAI, Anthropic, and in-house ones) with shared pipelines and caching systems. That shift didn’t just improve speed. It made their AI maintainable.
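To make the “AI layer” idea concrete, here’s a minimal sketch of what such a layer could look like. It is not Canva’s actual design: the `AILayer` class, the `Provider` type, and both stand-in providers are hypothetical names I made up for illustration. The point is only that callers talk to one interface, while caching and fallback live in a single shared place.

```python
from typing import Callable, Dict, List

# Hypothetical provider type: any function that maps a prompt to a completion.
Provider = Callable[[str], str]


class AILayer:
    """Routes prompts to interchangeable providers, with a shared cache and fallback."""

    def __init__(self, providers: Dict[str, Provider], order: List[str]):
        self.providers = providers
        self.order = order                # preferred provider first
        self.cache: Dict[str, str] = {}   # shared across all providers

    def complete(self, prompt: str) -> str:
        # Serve repeated prompts from the cache instead of calling a model again.
        if prompt in self.cache:
            return self.cache[prompt]
        errors = []
        for name in self.order:
            try:
                result = self.providers[name](prompt)
            except Exception as exc:
                # Remember the failure and fall through to the next provider.
                errors.append(f"{name}: {exc}")
                continue
            self.cache[prompt] = result
            return result
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only; real ones would call vendor SDKs.
def flaky_provider(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def backup_provider(prompt: str) -> str:
    return f"draft for: {prompt}"


layer = AILayer(
    providers={"primary": flaky_provider, "backup": backup_provider},
    order=["primary", "backup"],
)
print(layer.complete("write a tagline"))  # falls back to the backup provider
```

Swapping or adding a model then means changing the `providers` mapping, not every feature that calls it.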

Netflix had a similar moment with its recommendation system. What began as a hand-tuned algorithm evolved into a continuously retrained AI ecosystem. Every day, models learn from 260M user interactions in real time — predicting preferences, updating weights, and adapting content in milliseconds. That’s MLOps at its finest: pipelines, monitoring, and retraining all baked into the product lifecycle.

And this is where IBM Think hits the nail on the head:

Scaling AI isn’t just deploying one or two models — it’s operationalizing how AI evolves inside your business. You need infrastructure, data lineage, and retraining loops that don’t break under pressure.

McKinsey (2024) found that 62% of AI projects fail not because of bad models — but because of weak infrastructure.

So, if your prototype works today but your pipeline can’t handle a million users tomorrow, it’s not innovation — it’s a ticking time bomb.

What works:

Refactor early.

Build modular architectures.

Treat AI systems like living organisms — retraining, adapting, and evolving constantly.

The takeaway: You don’t scale prompts. You scale systems.

Governance — Turning Compliance into a Competitive Edge

Fast companies die by chaos. Great ones scale through governance.

The first generation of AI builders treated governance like an afterthought — something to handle after the launch. But now, as the EU AI Act, GDPR, and dozens of global frameworks tighten the rules, companies are realizing: governance is growth infrastructure.

JPMorgan Chase is a textbook example. When it began scaling AI for credit risk, it didn’t wait for regulators to come knocking. The bank built an internal AI Fairness Dashboard to detect bias before deployment. Every model now goes through a fairness audit, not because compliance demanded it — but because trust became a business advantage.
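What could a pre-deployment fairness audit check? One common option is demographic parity: compare approval rates across groups and block the release if the gap is too wide. The sketch below assumes that metric and an arbitrary 0.1 threshold. It is not a description of JPMorgan’s dashboard, and the function names and sample data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Decision = Tuple[str, bool]  # (group label, approved?)


def approval_rates(decisions: List[Decision]) -> Dict[str, float]:
    """Approval rate per group."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(decisions: List[Decision]) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


def passes_fairness_gate(decisions: List[Decision], max_gap: float = 0.1) -> bool:
    """Assumed policy: block deployment when the gap exceeds max_gap."""
    return parity_gap(decisions) <= max_gap


# Made-up model decisions on a validation set.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", True),
]
print(approval_rates(sample))        # {'A': 0.75, 'B': 0.5}
print(passes_fairness_gate(sample))  # False
```

Demographic parity is only one lens. A real audit would usually look at several metrics and at error rates per group, not just approval rates.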

Then there’s IBM watsonx, arguably one of the biggest AI rebrands of the last two years. Watson’s first iteration in 2011 overpromised and underdelivered. But watsonx rebuilt trust by doing something simple but revolutionary: baking governance into the product. Every model comes with lineage tracking, transparency reports, and explainability by default. Governance became a feature.

Salesforce followed a similar playbook with Einstein GPT. They didn’t just talk about accuracy — they talked about “responsible scaling.” Enterprises loved it because it de-risked adoption.

The takeaway?

Governance isn’t red tape. It’s brand equity.

IBM Think’s 2025 research says it best: scaling AI means scaling trust.

And that means:

Building open, hybrid cloud architectures for traceability.

Integrating compliance into MLOps workflows.

Making explainability visible to both engineers and executives.

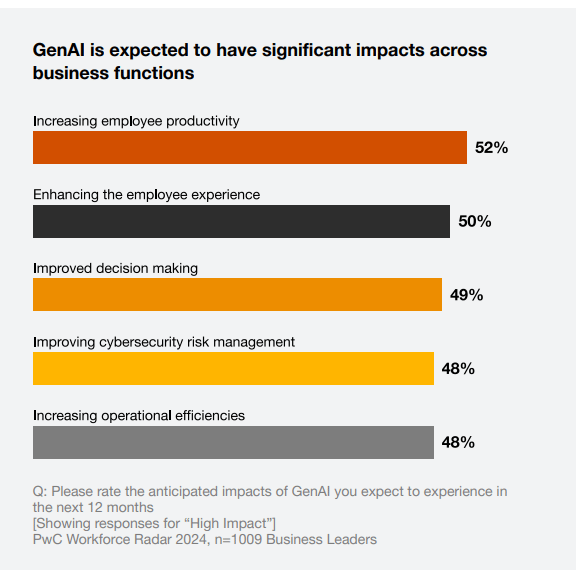

📈 PwC (2025) found that companies with built-in governance frameworks scale AI 3x faster than those that bolt it on later.

Governance isn’t the enemy of speed. It’s the reason speed is sustainable.

Adoption — Building Trust That Scales

You can have the smartest AI in the world — but if users don’t trust it, it’s dead on arrival.

The hardest part of scaling AI isn’t building the model. It’s building relationships.

Grammarly is one of my favorite case studies here. Instead of hiding the algorithm behind the scenes, Grammarly gives users full control: you can reject, modify, or accept every suggestion. That sense of agency builds psychological trust. People use Grammarly because they trust themselves when they use it.

Duolingo nails the same principle. Its AI tutor doesn’t just correct you — it tells you why. That transparency makes learning feel collaborative, not punitive. The model becomes a partner, not a judge.

And then there’s DeepMind’s AlphaFold. When it achieved groundbreaking accuracy in protein structure prediction, DeepMind could’ve kept it private. Instead, it open-sourced the code and released its predicted structures in a public database. That move built instant credibility with scientists and positioned DeepMind as a trusted force for good, not just profit.

These stories all share the same DNA:

Trust scales faster than code.

Stanford’s 2024 Human-AI Trust Study found that users are 41% more likely to adopt AI when they understand its reasoning.

So here’s the real playbook:

Design for transparency — show “why” the model does what it does.

Give users control — the ability to override or adjust outputs.

Admit uncertainty — honesty is the new authority.
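Here’s one way those three rules could show up in code. This is a sketch under my own assumptions: the `Suggestion` shape, the 0.6 confidence cutoff, and the wording of the messages are all hypothetical, and it isn’t how Grammarly or Duolingo actually work.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    original: str
    proposed: str
    reason: str        # transparency: why the model proposes this
    confidence: float  # 0.0 to 1.0, as reported by the model


def present(s: Suggestion, min_confidence: float = 0.6) -> str:
    """Render a suggestion, admitting uncertainty when confidence is low."""
    if s.confidence < min_confidence:
        return f"Not sure about '{s.original}'. Possible fix: '{s.proposed}' ({s.reason})."
    return f"Suggest '{s.proposed}' instead of '{s.original}' because {s.reason}."


def resolve(s: Suggestion, accepted: bool, user_edit: Optional[str] = None) -> str:
    """The user always has the last word: accept, reject, or modify."""
    if user_edit is not None:
        return user_edit
    return s.proposed if accepted else s.original


tip = Suggestion("their", "there", "it refers to a place", confidence=0.4)
print(present(tip))                  # hedged wording, since 0.4 < 0.6
print(resolve(tip, accepted=False))  # their
```

Notice that the model never writes to the document directly. Every change goes through `resolve`, which is where user control lives.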

Trust isn’t built by perfection. It’s built by participation.

The Five Stages of Scaling AI

From Speed to Sustainability

Most organizations rush straight to “execution.”

But sustainable AI doesn’t come from speed — it comes from sequence.

Across hundreds of implementations in different industries, a clear five-stage path emerges, from the first model to a fully AI-powered organization.

Let’s break it down.

1️⃣ Starting with Strategy — Build a Game Plan That Matters

The biggest mistake teams make? Starting with tools instead of problems.

A strong AI strategy starts with intent, not infrastructure. It’s about asking:

→ What pain point are we solving?

→ How does this tie to our business objectives?

→ What does success look like in numbers?

Example:

A global retailer was struggling with customer service delays. Instead of “adding a chatbot,” their AI strategy focused on reducing support resolution time by 40%.

That simple shift reframed the project from “AI for automation” to “AI for customer satisfaction.”

The result? They automated repetitive queries, retrained agents for high-value tasks, and lifted CSAT scores by 23%.

Lesson:

Secure executive sponsorship early.

Align KPIs.

Define your scope clearly.

The best AI projects don’t start in IT — they start in the boardroom.

2️⃣ Data & Tech Readiness — Laying the Groundwork

Once the strategy is set, your next bottleneck will always be data.

AI is only as good as the information it learns from. That means cleaning, structuring, and connecting data sources before you even think about fine-tuning a model.

Example:

A global manufacturer integrated sensor data from its production lines into a hybrid cloud system. Using an MLOps pipeline, they built predictive maintenance models that reduced downtime by 30% — saving $2.4M annually.

The hidden truth? They didn’t start with the model. They started with the data architecture.

What matters:

Audit your data landscape: where it lives, who owns it, how it flows.

Improve data quality and governance before scaling models.

Choose scalable infrastructure — hybrid or cloud-native, depending on sensitivity.
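A data audit doesn’t have to start big. As a rough sketch, two of the cheapest checks are “how much of this field is missing?” and “how many duplicate keys do we have?”. The field names and sample readings below are made up for illustration, and a real audit would also cover ownership, lineage, and freshness.

```python
from typing import Dict, List

Record = Dict[str, object]


def missing_rate(records: List[Record], field: str) -> float:
    """Share of records where the field is absent or None."""
    if not records:
        return 0.0
    missing = sum(1 for r in records if r.get(field) is None)
    return missing / len(records)


def duplicate_count(records: List[Record], key: str) -> int:
    """Number of records whose key value was already seen earlier."""
    seen = set()
    dupes = 0
    for r in records:
        value = r.get(key)
        if value in seen:
            dupes += 1
        seen.add(value)
    return dupes


# Hypothetical sensor readings: one null, one missing field, one repeated ID.
readings = [
    {"sensor_id": 1, "temp_c": 71.5},
    {"sensor_id": 2, "temp_c": None},
    {"sensor_id": 2, "temp_c": 70.1},
    {"sensor_id": 3},
]
print(missing_rate(readings, "temp_c"))        # 0.5
print(duplicate_count(readings, "sensor_id"))  # 1
```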

As one AI leader put it: “You can’t scale AI if your data doesn’t scale with it.”

3️⃣ Executing with Agility — From Concept to Reality

Execution is where most AI projects die — not because of poor technology, but because teams over-plan and under-test.

AI isn’t linear. It’s experimental. The winning formula is agile + cross-functional.

Example:

A mid-sized retailer wanted to use AI for demand forecasting.

Instead of building a 6-month solution, they launched a 3-week proof of concept for one region. It tested real data and proved a 12% accuracy gain.

Encouraged, they expanded region by region — iterating after each sprint. Within six months, inventory costs dropped by 17%.

Why it worked:

They built, tested, refined, and learned — instead of “launching and hoping.”

Takeaway:

Start small.

Iterate fast.

Validate every improvement with data, not opinion.
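“Validate with data” can be as simple as scoring the old forecast and the new one against the same actuals. The sketch below assumes mean absolute percentage error (MAPE) as the metric, which is my choice for illustration and not necessarily what the retailer in the example used. The numbers are invented.

```python
from typing import Sequence


def mape(actual: Sequence[float], forecast: Sequence[float]) -> float:
    """Mean absolute percentage error; assumes no actual value is zero."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    return sum(abs(a - f) / abs(a) for a, f in zip(actual, forecast)) / len(actual)


def relative_improvement(
    actual: Sequence[float],
    baseline: Sequence[float],
    candidate: Sequence[float],
) -> float:
    """Fraction by which the candidate cuts error versus the baseline.

    Assumes the baseline error is not zero.
    """
    base_err = mape(actual, baseline)
    return (base_err - mape(actual, candidate)) / base_err


# Made-up weekly demand for one pilot region.
actual = [100.0, 200.0, 400.0]
baseline = [120.0, 160.0, 480.0]   # each forecast is off by 20%
candidate = [110.0, 180.0, 440.0]  # each forecast is off by 10%

print(round(mape(actual, baseline), 3))                             # 0.2
print(round(relative_improvement(actual, baseline, candidate), 3))  # 0.5
```

Run the same comparison after every sprint and you have a number to argue about instead of an opinion.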

Your first AI win isn’t your biggest — it’s your foundation.

4️⃣ Deploying for Adoption — Making AI Invisible

The moment AI goes live, it stops being a technical challenge and becomes a human one.

Users don’t resist AI because they hate innovation — they resist it because it disrupts routine.

Example:

A financial institution deploying an AI fraud detection system faced heavy skepticism. Instead of replacing processes, they integrated AI into existing dashboards.

Agents could see how the model scored risk, override it, and send feedback to retrain it.

That feedback loop built comfort, confidence, and accountability. Within months, false positives dropped by 28%.
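A feedback loop like that needs somewhere to land. Below is a minimal sketch, assuming each agent review is logged next to the model’s own call. The `Review` and `FeedbackLog` names are hypothetical, and the overturn rate shown is only a rough proxy for false positives (the share of model flags that agents rejected).

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Review:
    transaction_id: str
    risk_score: float       # the score shown to the agent
    model_flagged: bool     # the model's decision
    agent_says_fraud: bool  # the agent's confirmation or override


@dataclass
class FeedbackLog:
    reviews: List[Review] = field(default_factory=list)

    def record(self, review: Review) -> None:
        self.reviews.append(review)

    def flag_overturn_rate(self) -> float:
        """Share of model flags that agents overturned."""
        flagged = [r for r in self.reviews if r.model_flagged]
        if not flagged:
            return 0.0
        overturned = sum(1 for r in flagged if not r.agent_says_fraud)
        return overturned / len(flagged)

    def training_labels(self) -> List[Tuple[str, bool]]:
        """Agent verdicts, ready to join back onto features for retraining."""
        return [(r.transaction_id, r.agent_says_fraud) for r in self.reviews]


log = FeedbackLog()
log.record(Review("t1", 0.92, model_flagged=True, agent_says_fraud=True))
log.record(Review("t2", 0.81, model_flagged=True, agent_says_fraud=False))
log.record(Review("t3", 0.10, model_flagged=False, agent_says_fraud=False))
log.record(Review("t4", 0.77, model_flagged=True, agent_says_fraud=False))
print(round(log.flag_overturn_rate(), 2))  # 0.67
```

Tracking that rate over time is what lets you say whether retraining on agent feedback is actually helping.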

AI adoption requires empathy as much as engineering.

Train users early.

Communicate value clearly.

Celebrate small wins publicly.

A phased rollout + champion users in each department = smoother transitions and higher trust.

5️⃣ Scaling to an AI-Powered Organization — The Long Game

Deploying your first AI model is a milestone. Scaling it across the company is transformation.

Example:

Microsoft’s Responsible AI Center of Excellence offers a masterclass here. They built a dedicated team that oversees every AI system — from bias reviews to explainability checks — ensuring consistency across hundreds of products.

That structure allowed them to innovate fast and stay compliant globally.

Scaling AI is less about adding models and more about building systems of learning.

The goal? To make AI a muscle, not a project.

How leading organizations do it:

Create AI Centers of Excellence for governance and shared learning.

Build culture through transparency — showcase internal wins.

Continuously retrain and refresh models as data evolves.
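How do you know when data has “evolved” enough to retrain? One widely used signal is the Population Stability Index (PSI), which compares the distribution a model was trained on with what it sees in production. The sketch below is a plain-Python version under a few assumptions: the bin edges are chosen by hand, all values fall inside them, and the 0.2 trigger is a rule of thumb you would tune, not a standard.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values per bin. Bins are [edges[i], edges[i+1]); the last bin
    also includes its upper edge. Assumes at least one value is in range."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    return [c / total for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index: sum of (a - e) * ln(a / e) over bins."""
    score = 0.0
    for e, a in zip(bin_shares(expected, edges), bin_shares(actual, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        score += (a - e) * math.log(a / e)
    return score


def needs_retraining(expected, actual, edges, threshold: float = 0.2) -> bool:
    """Assumed policy: retrain when PSI exceeds the threshold."""
    return psi(expected, actual, edges) > threshold


train = [1, 2, 3, 4, 5, 6, 7, 8]  # feature values at training time
live = [6, 7, 7, 8, 8, 8, 5, 1]   # the same feature in production
edges = [0, 4, 8]

print(round(psi(train, live, edges), 3))     # 0.359
print(needs_retraining(train, live, edges))  # True
```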

In short:

You don’t scale AI by doing more.

You scale it by doing it better.

The AI Scaling Playbook — How to Go from Pilot to Platform

Alright, let’s get tactical.

If you’re leading or advising an AI initiative, here’s the playbook that separates scalable systems from one-off demos.

1. People: Build the Right Team DNA

Scaling AI isn’t a solo act. It’s cross-functional by design.

Pair domain experts with data scientists early — the blend of human intuition and technical depth drives accuracy and adoption.

Appoint an executive sponsor (CIO, COO, or CPO) who owns business outcomes, not just “AI innovation.”

Build an AI Center of Excellence (CoE) to maintain best practices and governance templates.

IBM Think (2025) found that organizations with AI CoEs operationalize models 45% faster.

2. Process: Create Scalable Systems, Not Experiments

Your process defines your product.

Use agile, sprint-based workflows for AI — short iterations, frequent feedback, continuous retraining.

Implement MLOps pipelines for monitoring, logging, and rollback. Treat every model like software with version control.

Embed governance directly into these workflows. Every model release should have fairness, compliance, and performance reports automatically generated.
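To show how those three habits fit together, here’s a small sketch of a release gate plus rollback. Everything in it is an assumption for illustration: the `ReleaseReport` fields, the thresholds, and the `ModelRegistry` class are hypothetical and stand in for whatever registry or ModelOps tooling you actually use.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ReleaseReport:
    version: str
    accuracy: float
    parity_gap: float      # fairness metric, lower is better
    p95_latency_ms: float


class ModelRegistry:
    """Keeps a history of promoted versions so a bad release can be rolled back."""

    def __init__(self, min_accuracy: float = 0.9,
                 max_parity_gap: float = 0.1,
                 max_latency_ms: float = 500.0):
        self.min_accuracy = min_accuracy
        self.max_parity_gap = max_parity_gap
        self.max_latency_ms = max_latency_ms
        self.history: List[ReleaseReport] = []

    @property
    def live(self) -> Optional[str]:
        """Version currently serving, or None if nothing was promoted."""
        return self.history[-1].version if self.history else None

    def gate(self, report: ReleaseReport) -> List[str]:
        """Return the failed checks; an empty list means the release may ship."""
        failures = []
        if report.accuracy < self.min_accuracy:
            failures.append("accuracy")
        if report.parity_gap > self.max_parity_gap:
            failures.append("fairness")
        if report.p95_latency_ms > self.max_latency_ms:
            failures.append("latency")
        return failures

    def promote(self, report: ReleaseReport) -> bool:
        """Promote only if every check passes."""
        if self.gate(report):
            return False
        self.history.append(report)
        return True

    def rollback(self) -> Optional[str]:
        """Drop the live version and return the one now serving, if any."""
        if self.history:
            self.history.pop()
        return self.live


registry = ModelRegistry()
registry.promote(ReleaseReport("v1", 0.93, 0.04, 210.0))
biased = ReleaseReport("v2", 0.95, 0.30, 190.0)
print(registry.gate(biased))     # ['fairness']
print(registry.promote(biased))  # False
registry.promote(ReleaseReport("v3", 0.94, 0.05, 250.0))
print(registry.rollback())       # v1
```

The design choice worth copying is that the report is the release artifact. If the report can’t be produced, or fails a check, nothing ships.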

Example: Canva now ships AI features through an internal ModelOps pipeline that automatically flags bias drift and latency issues before release.

3. Platform: Choose Infrastructure That Scales

AI scaling fails when tech stacks can’t keep up.

Move toward a hybrid cloud setup — flexible, secure, and globally accessible.

Centralize data in feature stores and lakehouses with standardized schemas.

Use open APIs and modular integrations so you can swap providers without re-architecting.

Netflix’s modular recommender pipeline allows new models to plug in or roll back seamlessly — critical when scaling globally.

4. Trust: Operationalize Transparency

Make trust measurable.

Design user interfaces that explain AI reasoning (“why we made this decision”).

Give users override control. Let them correct the AI — and feed those corrections back into training loops.

Publish model confidence scores. Honesty builds authority.

Grammarly’s “confidence meter” and explainability pop-ups turn model uncertainty into a strength.

5. Scale: Institutionalize Learning

Scaling AI isn’t about adding features — it’s about multiplying learning velocity.

Capture every lesson learned in shared playbooks or internal wikis.

Run quarterly “AI retrospectives” across departments.

Treat every AI deployment as a case study to refine the next one.

Microsoft’s Responsible AI CoE is a gold standard here — every project feeds insights back into the corporate knowledge base.

Final Takeaways — How to Build AI That Lasts

After watching hundreds of companies experiment, fail, and finally succeed with AI, the pattern is unmistakable:

Speed builds headlines.

Governance builds trust.

Trust builds scale.

The first generation of AI winners were fast.

The next generation will be sustainable.

If you’re leading an AI initiative today, here’s what matters most:

Refactor before it’s too late. Your MVP won’t scale without architecture.

Bake governance into your workflow, not your policy deck.

Design for user trust — show, don’t tell.

Build internal alignment — every great AI project has a human champion.

Treat scaling as an enterprise transformation, not a technical upgrade.

AI isn’t just about automation — it’s about creating measurable, scalable solutions that transform how the entire business operates.

And that’s where this next wave is heading — from fast demos to long-term design, from hype to habit, from MVPs to ecosystems that evolve with every user interaction.

Bottom line

The more I study AI transformations, the more I realize that the real barrier isn’t technology. It’s mindset.

Most teams don’t fail because their models underperform; they fail because they never reimagine how decisions get made once AI enters the room.

We treat AI like a tool, but it’s actually a mirror. It reflects how open we are to change — how willing we are to question habits that once felt unshakable.

And that’s uncomfortable. Because transformation isn’t about plugging in a model; it’s about unlearning control.

When you let AI take over a process, you also reveal the gaps in your culture — your trust systems, your data discipline, your leadership clarity.

That’s where true evolution begins: not when the pilot succeeds, but when people start behaving differently because of it.

So maybe the real measure of AI maturity isn’t accuracy — it’s adaptability.

And maybe the companies that last aren’t those who experiment fastest, but those who learn the deepest.

See you next time,

— Naseema

