Hey friends, happy Monday.

Over the past year, a lot of people have tried to “use AI more.” They write prompts. They generate content. They experiment with tools.

But if you look closely, most of that usage stays shallow.

It improves output slightly.

It saves some time.

It feels productive.

But it doesn’t fundamentally change how they think or operate.

At the same time, there’s a smaller group of people using AI very differently. They are not just using ChatGPT. They are building systems around it. They treat it less like a tool and more like a structured extension of their thinking.

And the difference shows up quickly.

They make decisions faster.

They structure ideas more clearly.

They execute with less friction.

This is where the real leverage is.

Not in using AI occasionally.

But in designing a personal AI agent that consistently improves how you think and how you work.

Today’s edition breaks down how to do that.

We’ll cover:

What a personal AI agent actually is (and isn’t)

Why most people fail to get real leverage from AI

A simple architecture for building your own agent

The core workflows it should support

A practical build plan you can follow this week

And how this compounds over time

Let’s start with the shift.

— Naseema Perveen


The Big Idea

AI Becomes Valuable When It Becomes Systematic

Most people use AI as a tool.

They open it when they need something.

They ask a question.

They get an answer.

They move on.

That model works for small tasks. But it does not compound. The real shift happens when AI becomes part of a system.

A system that:

stores context

applies consistent logic

supports recurring workflows

improves over time

This is what a personal AI agent is. Not a chatbot. A structured layer that sits between your thinking and your execution. It helps you:

break down problems

stress-test decisions

generate structured outputs

iterate faster

In other words, it improves both cognition and output.

The Data

Why AI as a Thinking Layer Matters

Research from McKinsey & Company estimates generative AI could contribute up to $4.4 trillion annually, with a large share coming from knowledge work like analysis, writing, and decision support.

A study by MIT researchers found that professionals using ChatGPT for writing tasks finished about 40% faster, with output rated about 18% higher in quality.

And experiments run with Boston Consulting Group found that consultants using GPT-4 produced higher-quality work on complex tasks that fell within the model's capabilities.

The pattern is clear. AI does not just speed up execution. It improves how thinking is structured. And when thinking improves, output follows.

Why Most People Don’t Get This Benefit

The issue is not access. It’s usage. Most people:

ask vague questions

provide little context

accept first outputs

never reuse structure

They treat AI like search. Not like a system. Which means every interaction resets to zero.

No memory.

No refinement.

No compounding.

That is why the gains stay small.

The Framework

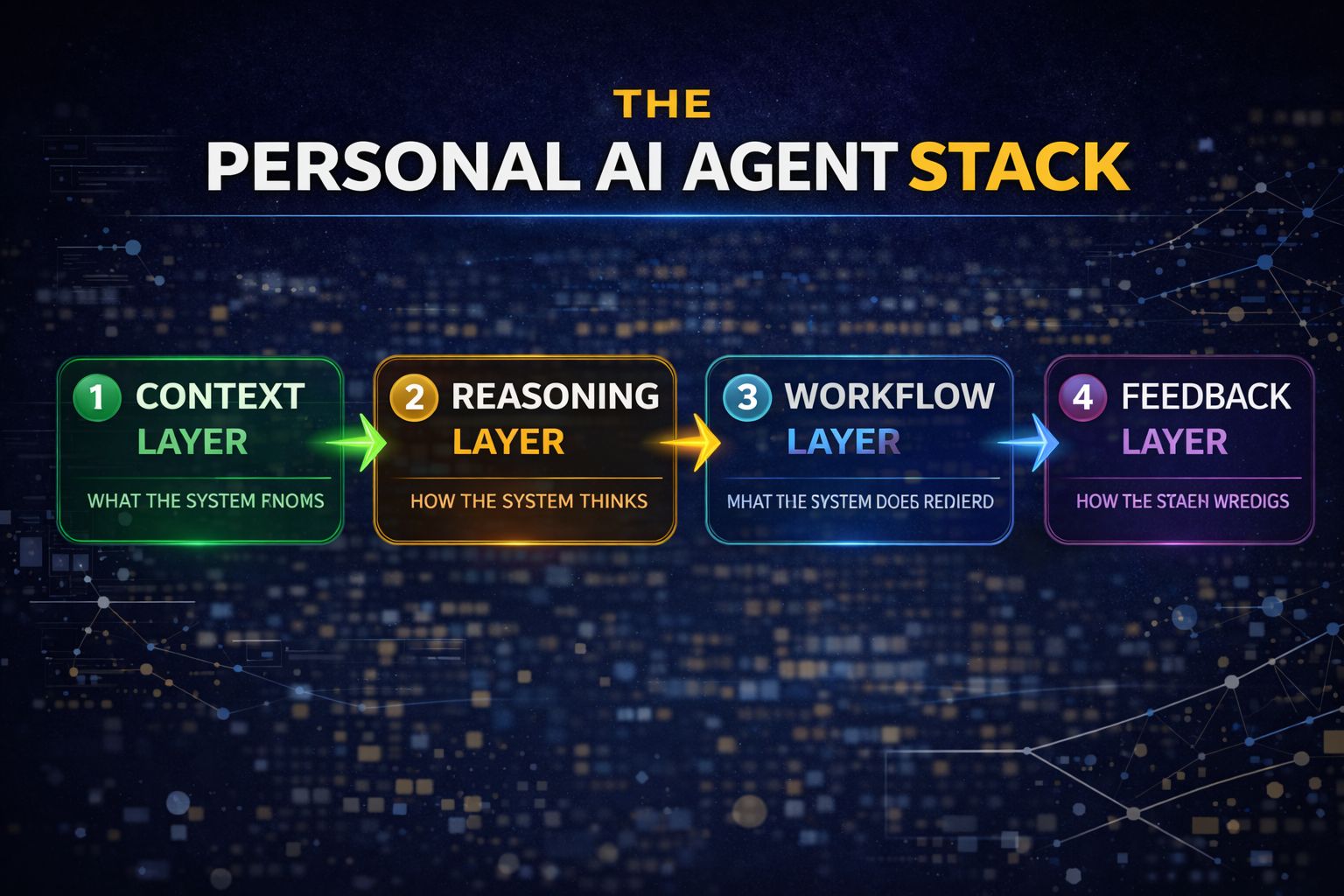

The Personal AI Agent Stack

A useful way to think about a personal AI agent is not as a single tool, but as a layered system.

Each layer solves a different problem. And most people stop at the surface.

They use AI without context.

They prompt without structure.

They generate without refinement.

That’s why the outputs feel inconsistent.

When the four layers (context, reasoning, workflow, and feedback) work together, the system starts to behave differently. It becomes more predictable, more useful, and more aligned with how you actually think and work.

1. Context Layer

What the system knows

This is the most overlooked layer, and also the most important.

AI without context is generic by default. It does not know your goals, your constraints, or what a “good answer” looks like in your world.

So it gives broadly correct but practically weak responses.

The moment you add context, the quality shifts.

This includes:

your goals and priorities

your role and responsibilities

your business model or domain

your constraints (time, budget, resources)

your preferences (tone, format, decision style)

The goal here is not to provide everything. It is to provide enough signal for the AI to reason within your reality.

What this looks like in practice:

Instead of:

“How should I price this product?”

You say:

“I’m targeting early-stage SaaS founders, pricing between $20–$100/month, with a goal of maximizing conversion over margin. Where is my pricing weak?”

Same model. Different output quality.

Practical tip:

Create a reusable “context block” you paste into key workflows

Keep it concise but specific

Update it as your priorities change

Context is what turns AI from informative to useful.
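If you keep your prompts in code or a notes script, a context block can be a small reusable object. The sketch below is one possible shape, not a required format: the `ContextBlock` class, its field names, and the `with_context` helper are all hypothetical, and the role text is an invented example built around the pricing question above.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBlock:
    """Reusable context pasted ahead of any task prompt (illustrative structure)."""
    role: str
    goals: list[str]
    constraints: list[str] = field(default_factory=list)
    preferences: list[str] = field(default_factory=list)

    def render(self) -> str:
        lines = [f"Role: {self.role}"]
        for label, items in (
            ("Goals", self.goals),
            ("Constraints", self.constraints),
            ("Preferences", self.preferences),
        ):
            if items:  # skip empty sections to keep the block concise
                lines.append(f"{label}: " + "; ".join(items))
        return "\n".join(lines)


def with_context(context: ContextBlock, question: str) -> str:
    """Prepend the rendered context block to a task-specific question."""
    return f"{context.render()}\n\nTask: {question}"


# Hypothetical values based on the pricing example above.
pricing_context = ContextBlock(
    role="Product owner targeting early-stage SaaS founders",
    goals=["maximize conversion over margin"],
    constraints=["pricing between $20 and $100 per month"],
)
prompt = with_context(pricing_context, "Where is my pricing weak?")
print(prompt)
```

Because the context lives in one place, updating a goal or constraint changes every prompt that reuses it.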

2. Reasoning Layer

How the system thinks

Once context is clear, the next layer is structure.

Most people ask AI open-ended questions and expect structured thinking in return.

That rarely works.

AI performs significantly better when you define how it should think, not just what it should answer.

This is where structured prompts come in.

You are effectively giving the system a reasoning framework.

Examples:

decision frameworks

analysis templates

evaluation criteria

comparison structures

Instead of asking:

“Is this a good idea?”

You shift to:

“Evaluate this idea across market demand, differentiation, and execution risk. Highlight the top 3 weaknesses and suggest improvements.”

This forces the model to think in a defined way.

Useful reasoning patterns:

Compare options → highlight trade-offs

Stress test → identify risks and assumptions

Break down → simplify complex problems

Prioritize → rank based on clear criteria

Practical tip:

Build a small library of 5–10 “thinking prompts” you reuse:

“Compare A vs B across…”

“What assumptions am I making here?”

“What would a skeptical customer say?”

Over time, this becomes your default thinking system.
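One lightweight way to keep such a library is a dictionary of templates with named blanks. This is only a sketch: the template names, the exact wording, and the `thinking_prompt` helper are assumptions for illustration, and the sample subject is made up.

```python
# Hypothetical library of reusable "thinking prompts"; names and wording are illustrative.
THINKING_PROMPTS = {
    "compare": "Compare {a} vs {b} across {criteria}. Highlight the trade-offs.",
    "assumptions": "What assumptions am I making here?\n\n{subject}",
    "skeptic": "What would a skeptical customer say about this?\n\n{subject}",
    "evaluate": (
        "Evaluate this idea across {criteria}. "
        "Highlight the top 3 weaknesses and suggest improvements.\n\n{subject}"
    ),
}


def thinking_prompt(name: str, **fields: str) -> str:
    """Fill a named template; raises KeyError if the name or a field is missing."""
    return THINKING_PROMPTS[name].format(**fields)


print(thinking_prompt(
    "evaluate",
    criteria="market demand, differentiation, and execution risk",
    subject="A weekly pricing teardown newsletter for SaaS founders",
))
```

The missing-field error is deliberate: it stops you from sending a half-filled framework and getting a generic answer back.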

3. Workflow Layer

What the system does repeatedly

This is where things start to compound.

A single good prompt is useful.

A repeatable workflow is leverage.

The goal is to identify tasks you do frequently and turn them into structured AI-supported workflows.

Common examples:

idea validation before building

content drafting with a defined structure

summarizing meetings into decisions and actions

breaking down strategies into steps

designing experiments with clear hypotheses

Instead of approaching each task from scratch, you standardize the process.

Example: Idea validation workflow

Define the idea with context

Ask AI to identify weaknesses

Generate alternative approaches

Compare options

Suggest next experiments

Now every idea goes through the same system.
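For readers who script their workflows, the five steps above can be sketched as a prompt sequence in which each step sees the answers before it. Everything here is illustrative: the step wording is paraphrased, `run_workflow` is a hypothetical helper, and `fake_model` is a stand-in that calls no real AI service. You would swap in whatever model call you actually use.

```python
from typing import Callable

# The five steps of the idea validation workflow, one instruction per step.
IDEA_VALIDATION_STEPS = [
    "Restate the idea and its context in two sentences.",
    "Identify the main weaknesses of the idea.",
    "Generate alternative approaches.",
    "Compare the original idea with the alternatives.",
    "Suggest the next experiments to run.",
]


def run_workflow(idea: str, steps: list[str], ask: Callable[[str], str]) -> list[str]:
    """Run each step in order, feeding earlier answers into later prompts."""
    answers: list[str] = []
    for number, instruction in enumerate(steps, start=1):
        previous = [f"Step {i} answer: {a}" for i, a in enumerate(answers, start=1)]
        parts = [f"Idea: {idea}", *previous, f"Step {number}: {instruction}"]
        answers.append(ask("\n".join(parts)))
    return answers


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; echoes the last instruction it was given."""
    return "[answer to] " + prompt.splitlines()[-1]


for answer in run_workflow("Paid Slack community for founders", IDEA_VALIDATION_STEPS, fake_model):
    print(answer)
```

Passing the model call in as `ask` keeps the workflow itself independent of any particular tool.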

This creates:

consistency in output

faster execution

clearer decision-making

Practical tip:

Start with 3 workflows you already repeat every week.

writing

decision-making

planning

Turn each into a simple, repeatable prompt sequence.

4. Feedback Layer

How the system improves

This is the layer most people skip.

They use AI. They get outputs. They move on.

No refinement. No iteration. No learning.

Which means the system never improves.

The feedback layer is what creates compounding value.

It includes:

refining prompts when outputs are weak

correcting mistakes instead of ignoring them

saving strong outputs for reuse

noticing patterns in what works and what doesn’t

Over time, you start building:

better prompts

clearer instructions

more reliable workflows

What this looks like in practice:

If an output is too vague → tighten constraints

If it misses context → add examples

If it’s inconsistent → break the task into steps

Small improvements stack quickly.

Practical tip:

Save your best prompts in one place

Reuse strong outputs as templates

Iterate instead of restarting

How the Stack Comes Together

Individually, each layer helps.

Together, they change how you operate.

Context makes outputs relevant

Reasoning improves thinking quality

Workflows create consistency

Feedback drives improvement

Most people operate without a stack.

They rely on one-off interactions.

Which is why results feel inconsistent.

The advantage comes from building a system where each interaction builds on the last.

Not because the model changes.

But because your structure does.


The Real Shift

A personal AI agent is not about doing more with AI.

It’s about thinking better with AI.

Once this stack is in place, something subtle changes.

You stop asking random questions.

You start running structured thinking loops.

And over time, that becomes a real advantage.

Because better thinking leads to better decisions.

And better decisions compound into better outcomes.

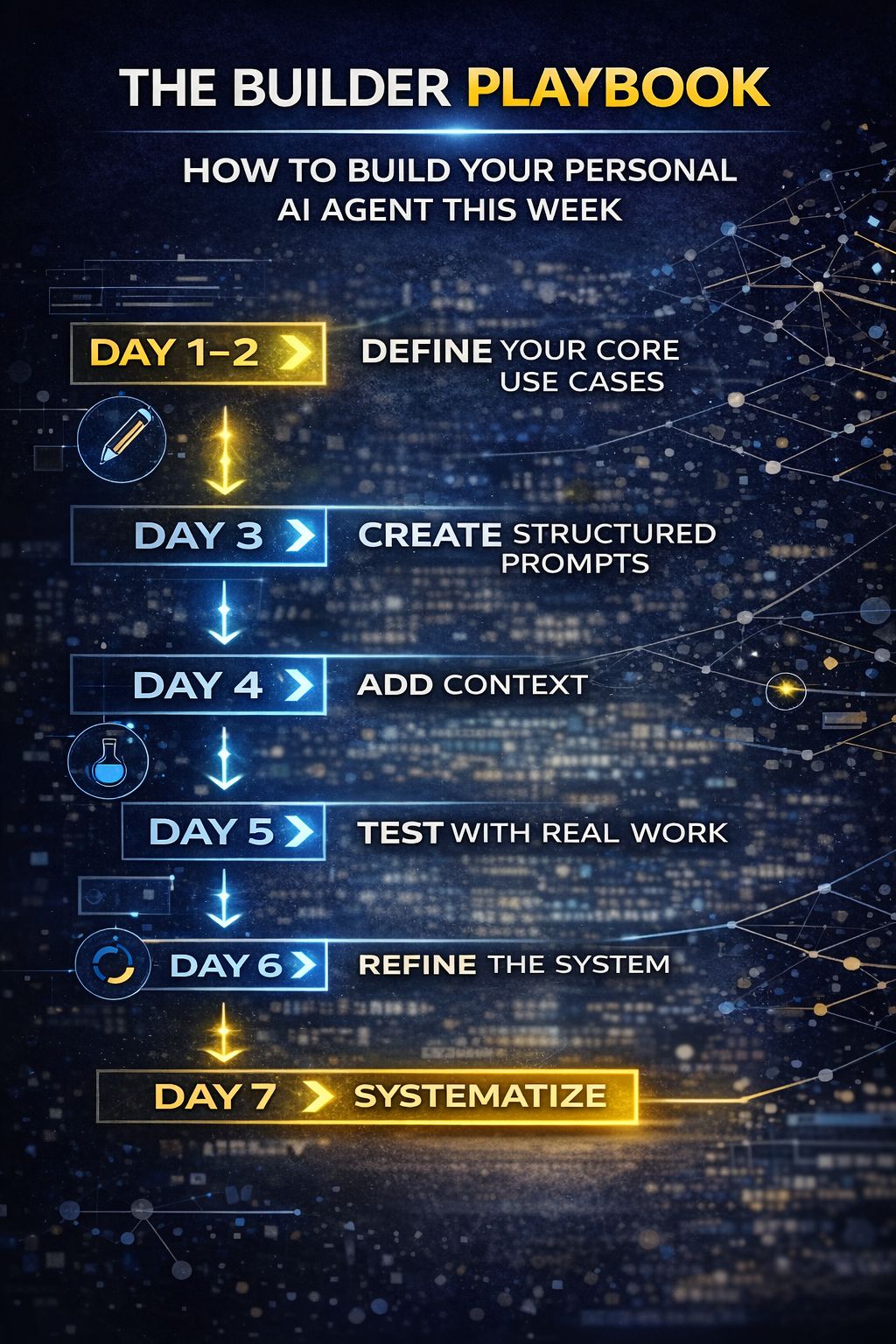

The Builder Playbook

How to Build Your Personal AI Agent This Week

One of the most common mistakes is overcomplicating this.

People assume building a “personal AI agent” requires tools, integrations, or engineering.

In practice, it starts much simpler.

You are not building infrastructure.

You are designing how you think and execute, with AI supporting that system.

This is a seven-day reset. By the end of it, you should have something usable, not perfect.

Day 1–2: Define Your Core Use Cases

Start with the work you already do

The goal is not to invent new workflows. It is to identify the ones that already consume time and attention.

Pick 3 to 5 workflows you repeat every week.

Common examples:

writing (emails, posts, docs)

decision-making (prioritization, trade-offs)

planning (weekly goals, task breakdowns)

analysis (research, synthesis, evaluation)

Focus on frequency, not complexity.

If something happens often, small improvements compound. If it rarely happens, they will not.

What to avoid:

broad categories like “strategy”

one-off tasks

workflows that are not clearly defined

Better framing:

“Draft a LinkedIn post from an idea”

“Break down weekly goals into 5 high-impact tasks”

“Evaluate two product ideas before committing”

Clarity here determines everything that follows.

Day 3: Create Structured Prompts

Turn workflows into repeatable systems

Now take each workflow and turn it into a structured prompt.

This is where most people stay too vague.

The goal is not to ask better questions. It is to define better instructions.

Each prompt should include:

clear objective

relevant constraints

specific output format

Instead of:

“Help me plan my week”

Use:

“Here are my goals for the week. Prioritize them into 5 tasks based on impact and urgency. For each task, define a clear outcome and estimated effort.”

Now the output is usable. If not, refine it.

Practical checklist:

Does the prompt define what “good” looks like?

Does it limit ambiguity?

Does it produce structured output?
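The three required parts and the checklist can also be enforced mechanically. The sketch below assumes a hypothetical `StructuredPrompt` class that refuses to render until the objective, constraints, and output format are all filled in. The field names and layout are one possible choice, not a standard.

```python
from dataclasses import dataclass


@dataclass
class StructuredPrompt:
    """A prompt with the three parts named above (illustrative structure)."""
    objective: str
    constraints: list[str]
    output_format: str

    def missing_parts(self) -> list[str]:
        """Return the names of any of the three parts left empty."""
        parts = {
            "objective": self.objective.strip(),
            "constraints": [c for c in self.constraints if c.strip()],
            "output_format": self.output_format.strip(),
        }
        return [name for name, value in parts.items() if not value]

    def render(self, inputs: str) -> str:
        """Build the full prompt text, or fail loudly if a part is missing."""
        missing = self.missing_parts()
        if missing:
            raise ValueError(f"prompt is missing: {', '.join(missing)}")
        constraint_lines = "\n".join(f"- {c}" for c in self.constraints)
        return (
            f"Objective: {self.objective}\n"
            f"Constraints:\n{constraint_lines}\n"
            f"Output format: {self.output_format}\n\n"
            f"Inputs:\n{inputs}"
        )


weekly_plan = StructuredPrompt(
    objective="Prioritize my goals for the week into 5 tasks.",
    constraints=["Rank by impact and urgency"],
    output_format="For each task: a clear outcome and estimated effort.",
)
print(weekly_plan.render("1. Ship onboarding email\n2. Fix billing bug"))
```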

Day 4: Add Context

Make the system aware of your reality

At this stage, most prompts still produce generic responses.

Context fixes that.

Document and reuse:

your goals (short-term and long-term)

your audience or users

your constraints (time, resources, priorities)

You do not need a long document.

You need a reusable context block that can be applied across workflows.

Example:

“I run a newsletter for founders focused on practical AI insights. My goal is to increase engagement and retention. I prefer concise, structured content.”

Now every output aligns with your environment. Context is what makes outputs feel tailored instead of generic.

Practical tip:

Keep this in a note

Reuse it across prompts

Update it every few weeks

Day 5: Test with Real Work

Replace theory with actual usage

This is where the system gets real.

Take your actual work from the day and run it through your prompts.

Not examples. Not hypotheticals.

Real inputs.

Observe carefully:

Where does the output feel strong?

Where does it break down?

Where does it miss nuance?

This step is less about success and more about diagnosis. Failures here are useful. They show where the system needs refinement.

What to look for:

vague outputs → prompt lacks constraints

irrelevant suggestions → context is missing

inconsistency → workflow needs structure
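If you jot down a one-word symptom each time an output disappoints, a few lines of code can show which fix to prioritize. This is a rough sketch: the symptom labels, the `diagnose` helper, and the sample notes are all invented for illustration, and the symptom-to-cause mapping simply restates the list above.

```python
from collections import Counter

# Symptom -> likely cause, taken from the list above.
LIKELY_CAUSE = {
    "vague": "prompt lacks constraints",
    "irrelevant": "context is missing",
    "inconsistent": "workflow needs structure",
}


def diagnose(observations: list[tuple[str, str]]) -> list[tuple[str, int]]:
    """Count likely causes across (workflow, symptom) notes, most frequent first."""
    causes = Counter(LIKELY_CAUSE[symptom] for _, symptom in observations)
    return causes.most_common()


# Hypothetical notes from one day of running real work through the prompts.
notes = [
    ("weekly planning", "vague"),
    ("LinkedIn post", "irrelevant"),
    ("idea evaluation", "vague"),
]
for cause, count in diagnose(notes):
    print(f"{count}x {cause}")
```

In this made-up sample, missing constraints show up twice, so tightening constraints would be the first refinement to make.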

Day 6: Refine

Improve the system, not just the output

Now you tighten the system.

Most improvements come from small adjustments:

breaking large prompts into steps

adding clearer instructions

specifying output formats

including examples

Example:

Instead of one prompt:

“Analyze this and suggest next steps”

Break it into:

Extract key insights

Identify risks

Suggest actions

This gives the model one narrow task at a time, which tends to improve consistency.

Practical mindset:

Do not ask, “Why is this output bad?”

Ask, “What instruction is missing?”

That shift changes how quickly the system improves.

Day 7: Systematize

Turn good prompts into assets

By now, you will have a few prompts that work well.

Do not leave them scattered.

Save them.

Organize them into a simple library:

Writing

Decision-making

Planning

Analysis

Each with 1–2 strong prompts.

This is now your personal AI system.

Not perfect. But usable.

And more importantly, repeatable.
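A note-taking app is enough for this library, but if you prefer a file you can version and search, a small JSON store works too. The sketch below is one possible layout: the file name, the `save_prompt` and `load_prompt` helpers, and the prompt names are assumptions. The prompt text is taken from the examples in this edition.

```python
import json
import tempfile
from pathlib import Path


def save_prompt(library_path: Path, category: str, name: str, text: str) -> dict:
    """Add or overwrite one prompt in a JSON file grouped by category."""
    if library_path.exists():
        library = json.loads(library_path.read_text(encoding="utf-8"))
    else:
        library = {}
    library.setdefault(category, {})[name] = text
    library_path.write_text(json.dumps(library, indent=2, sort_keys=True), encoding="utf-8")
    return library


def load_prompt(library_path: Path, category: str, name: str) -> str:
    """Read one saved prompt back; raises KeyError if it was never saved."""
    return json.loads(library_path.read_text(encoding="utf-8"))[category][name]


# Demo in a temporary directory so nothing is left behind on disk.
with tempfile.TemporaryDirectory() as folder:
    path = Path(folder) / "prompt_library.json"
    save_prompt(path, "Planning", "weekly",
                "Here are my goals. Break them into 5 high-impact tasks with expected outcomes.")
    save_prompt(path, "Decision-making", "compare",
                "Here are 3 options. Compare them across ROI, speed, and risk. "
                "Highlight trade-offs and recommend one.")
    print(load_prompt(path, "Planning", "weekly"))
```

Saving under the same category and name overwrites the old version, which fits the habit of iterating on a prompt instead of restarting.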

Where This Compounds

The first week creates structure.

The real value comes from repetition.

Over time, something subtle shifts.

You stop approaching problems from scratch.

You start running structured thinking loops.

Instead of:

“What should I do?”

You default to:

define → analyze → compare → decide

This changes how you operate.

You start to notice:

decisions become clearer because trade-offs are explicit

outputs become consistent because structure is reused

execution speeds up because thinking is pre-defined

This is not about speed alone.

It is about reducing randomness in how work gets done.

And that reduction compounds.

Practical Examples

Example 1: Decision Making

Instead of:

“What should I do?”

You use a structured agent prompt:

“Here are 3 options. Compare them across ROI, speed, and risk. Highlight trade-offs and recommend one.”

This improves clarity instantly.

Example 2: Content Creation

Instead of writing from scratch:

You define a structure:

hook

tension

insight

takeaway

Then iterate with AI.

This improves consistency and speed.

Example 3: Weekly Planning

Instead of vague planning:

“Here are my goals. Break them into 5 high-impact tasks with expected outcomes.”

Now planning becomes structured.

Closing Reflection

AI is often framed as a tool for doing more.

More content.

More output.

More speed.

That framing is incomplete. The more durable shift is cognitive. AI changes how quickly you can:

test an idea

challenge an assumption

explore alternatives

structure a decision

In the past, this kind of thinking required time, people, and iteration. Now it can happen in minutes. But only if the system around it is designed intentionally.

The people who benefit most will not be those who use AI occasionally. They will be the ones who build repeatable systems for thinking, not just producing.

A personal AI agent is not about replacing your work. It is about upgrading how your work happens.

It sits in a quiet but powerful place:

Between your inputs and your outputs.

Between your questions and your decisions.

Between your ideas and your execution.

And over time, that layer compounds.

Not because the technology is changing.

But because your interaction with it is becoming more structured, more deliberate, and more aligned with outcomes.

That is where the real leverage is.

So the more useful question is not:

“Am I using AI enough?”

It is:

“Is AI improving the quality of my thinking, or just increasing the volume of my output?”

Because only one of those compounds.

—Naseema

Writer & Editor, AIJ Newsletter
