Hey friends, happy Wednesday.

A lot of career advice around AI is still too vague.

You’ve probably heard some version of this already:

“Learn AI.”

“Use AI tools.”

“Upskill.”

“Adapt.”

That’s directionally right.

But it does not answer the question most people actually have:

What, specifically, becomes more valuable when AI starts handling more of the execution?

That is the question worth unpacking.

Because the labor market is not simply moving toward “less human work.”

It is moving toward a different mix of human work.

The execution-heavy layer is getting compressed.

The leverage layer is getting more important.

And the people who stay relevant will be the ones who understand the difference early.

This matters because the shift is not theoretical anymore. The World Economic Forum’s Future of Jobs Report 2025 projects that 22% of jobs will be disrupted by 2030, with 170 million new roles created and 92 million displaced, a net gain of 78 million. It also finds that nearly 40% of on-the-job skills are expected to change, while employers continue to cite skill gaps as a major barrier. Separately, LinkedIn reports that by 2030 about 70% of the skills used in most jobs will have changed, with AI acting as a major catalyst.

So today, I want to break this down in a practical way.

We’ll explore:

why execution-heavy work is becoming easier to automate

which human skills are rising in value

the new skill stack for staying relevant

the behaviors that signal long-term career strength

how different roles should adapt

the “coordination comfort zone” trap

a 90-day plan to become more valuable in an AI-first environment

interview questions and prompts to assess whether you’re actually building future-proof skills

Let’s start with the underlying shift.

— Naseema Perveen

The Real Shift: From Doing the Work to Directing It

The real change is not that humans are disappearing

The simplest way to think about this moment is:

AI reduces the value of routine execution.

It increases the value of high-quality judgment.

That is the pattern showing up across knowledge work.

Microsoft’s 2025 Work Trend Index describes a progression from AI as an assistant, to agents as digital colleagues, to humans increasingly setting direction while agents run parts of workflows and business processes. In that model, the human role shifts upward: less manual production, more orchestration, exception handling, relationship management, and strategic control.

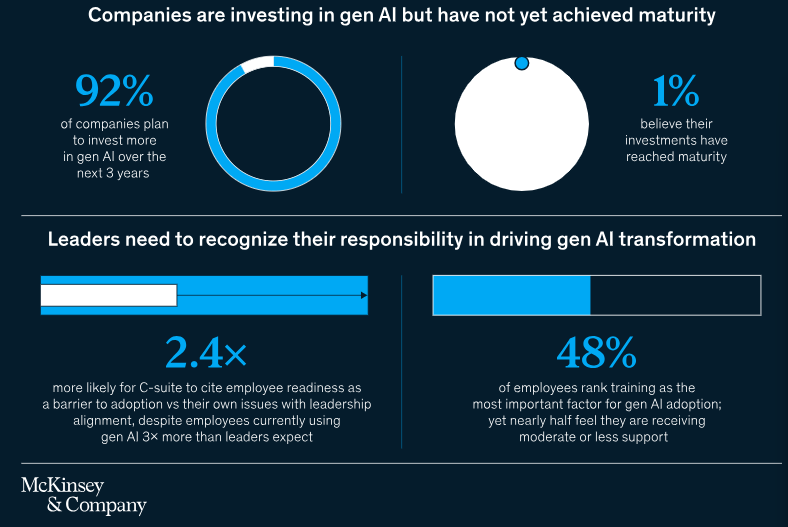

That same pattern is visible in McKinsey’s 2025 workplace research. Nearly all companies are investing in AI, but only 1% of leaders describe their companies as mature in deployment. McKinsey’s argument is useful here: the bottleneck is not whether employees can use AI tools. It is whether leaders and organizations can redesign work, align teams, and build systems that turn AI into measurable outcomes.

This is why the most durable career advantage is not “being good at prompts.”

It is being good at the work around the prompt:

framing the right problem

giving the right context

spotting weak logic

judging tradeoffs

making decisions under ambiguity

coordinating people and systems

taking responsibility for outcomes

That is the shift.

And once you see it, a lot of career confusion starts to clear up.

The Wrong Mental Model: “AI Will Replace People”

This framing is too blunt to be useful.

A better framing is:

AI replaces slices of work, not entire careers all at once.

Some slices are more exposed than others.

The most exposed slices usually have three characteristics:

They are repetitive

They are rules-based

They can be evaluated quickly against a known pattern

That includes things like:

first-draft writing

basic research synthesis

formatting and documentation

standard reporting

simple analysis

routine coding support

meeting summaries

operational follow-ups

lightweight customer interactions

That does not mean those jobs vanish overnight.

It means the market begins paying less for people whose value is concentrated in those tasks alone.

This is where many professionals get caught off guard.

They think they are being paid for output volume.

Often, they are actually being paid for one of two things:

solving non-obvious problems

reducing uncertainty for other people

If AI starts doing more of the output layer, then your relevance depends more on whether you can still do those two things.

That is why some people feel threatened by AI, while others feel amplified by it.

The difference is not just tool usage.

It is where their value sits in the workflow.

Skill Categories That Are Becoming More Valuable

The World Economic Forum’s 2025 report is clear on something important: even as demand rises for AI, big data, and cybersecurity skills, human capabilities like analytical thinking, resilience, flexibility, leadership, and collaboration remain critical core skills.

That matches what many operators are seeing in practice.

As execution gets cheaper, the following skill categories become more valuable, not less.

1. Problem framing

This is becoming one of the most important skills in modern work.

AI can generate options quickly.

But it still depends heavily on the quality of the problem definition.

Professionals who stay valuable know how to answer questions like:

What are we actually trying to solve?

What constraints matter here?

What would success look like?

What tradeoff are we willing to make?

What kind of answer is useful versus merely interesting?

This sounds basic.

It is not.

Most weak work starts with weak framing.

And most strong AI-assisted work starts with strong framing.

The person who can define the problem better often creates more value than the person who can execute the task faster.

In practice, this means you should get better at:

turning vague requests into clear problem statements

identifying assumptions before work begins

clarifying decision criteria early

distinguishing symptoms from root causes

AI makes these skills more important because it gives everyone more output. That makes clarity a bigger differentiator.

2. Judgment

If execution is getting commoditized, judgment is becoming premium.

By judgment, I mean the ability to make sound decisions when the answer is not obvious.

This includes:

choosing between good but imperfect options

knowing when the AI output is “good enough” and when it is dangerous

spotting what is missing

weighing speed versus quality

balancing short-term gains against longer-term consequences

This is why I think the biggest career mistake right now is over-identifying with production.

Production matters.

But judgment compounds.

The person who can consistently improve the quality of decisions will become more valuable than the person who simply produces more documents, code, decks, or summaries.

This is especially true in management, product, operations, strategy, sales, marketing, and any role where decisions affect resources, priorities, customers, or risk.

3. Context building

AI is powerful, but it is still context-hungry.

It does better when someone knows how to provide:

business context

customer context

historical context

organizational constraints

domain nuance

edge cases

stakeholder sensitivities

This is one reason experienced professionals remain important.

Not because they can do every task manually.

But because they know what matters.

They know what has been tried before.

They know what will fail politically.

They know what the team can actually implement.

They know what the customer really means.

That kind of context is hard to automate cleanly.

And the ability to package that context clearly is becoming a real advantage.

4. Communication

Not generic communication.

High-leverage communication.

The kind that reduces confusion, drives alignment, and helps groups move.

In an AI-first environment, communication becomes more valuable because work is speeding up.

When output volume rises, noise rises too.

So the people who stand out are the ones who can:

explain complex things simply

write clear recommendations

separate signal from noise

persuade without overcomplicating

bring others along during change

create alignment across teams

This matters even more in a world of human-agent collaboration.

If agents can produce more drafts, analyses, and options, then someone still needs to make sense of them for real humans.

That role becomes increasingly valuable.

5. Exception handling

Routine work is easiest to automate.

Edge cases are harder.

That means people who can navigate ambiguity, contradictions, and unusual conditions become more useful.

This includes professionals who can answer questions like:

What should we do when the system output conflicts with real-world conditions?

When do we override the model?

What matters most in this unusual case?

Who needs to be involved here?

What is the second-order risk?

Exception handling is often where trust is built.

It is also where real expertise shows up.

If your work can be fully reduced to a checklist, AI pressure on that work will increase.

If your work involves nuanced judgment in messy environments, your value may rise.

6. Relationship intelligence

This is one of the most under-discussed career moats.

As AI handles more execution, relationships become more strategic.

Why?

Because trust still drives:

deals

hiring

collaboration

leadership

influence

change management

customer loyalty

People who can build trust, navigate politics, manage stakeholders, and keep teams moving through uncertainty will remain difficult to replace.

You can automate many tasks.

You cannot easily automate durable credibility.

7. Ownership

This one is simple but powerful.

When AI increases output speed, organizations do not just want more output.

They want people who can own results.

Ownership means:

seeing the full workflow, not just your step

identifying issues before they become expensive

pushing work across the finish line

taking accountability for quality

improving the system, not just completing the task

A lot of professionals still operate like task recipients.

The ones who stay relevant increasingly operate like outcome owners.

That difference matters more every year.

The New AI-First Skill Stack

If I had to simplify the modern professional skill stack into three layers, it would look like this:

Layer 1: AI Fluency

This is the baseline.

You should know how to use AI tools well enough to increase the speed and quality of your work.

That includes:

prompting clearly

giving context

iterating intelligently

checking outputs

using AI for synthesis, drafting, ideation, comparison, and review

LinkedIn’s 2025 data supports this shift directly: AI literacy is among the fastest-growing skills across regions and functions, and LLM proficiency is also rising, especially in more technical roles.

In other words, AI fluency will not be a differentiator for long.

It is becoming table stakes.

Layer 2: Decision Quality

This is the leverage layer.

Once AI helps everyone move faster, the differentiator becomes the quality of your thinking.

This includes:

analytical thinking

prioritization

tradeoff clarity

strategic reasoning

risk awareness

synthesis

recommendation quality

This also aligns with the WEF finding that analytical thinking remains one of the most important core skills in the evolving labor market.

Layer 3: Human Leverage

This is the compounding layer.

It is your ability to influence outcomes through people, systems, and trust.

This includes:

leadership

collaboration

communication

coaching

adaptability

resilience

stakeholder management

organizational navigation

The WEF specifically highlights resilience, flexibility, leadership, and collaboration as continuing to matter alongside technical skills.

This is the stack I would build against.

AI fluency gets you in the game.

Decision quality makes you useful.

Human leverage makes you hard to replace.

The Behaviors That Now Signal Career Strength

Let’s make this more concrete.

In an AI-first job market, the strongest professionals increasingly behave like this:

They use AI proactively

They do not wait to be told.

They build it into how they think and work.

They validate, not just generate

They treat outputs as hypotheses.

They pressure-test before sharing.

They improve systems, not just tasks

They ask: can this workflow be redesigned?

Can this be standardized?

Can this become reusable?

They communicate crisply

They reduce ambiguity for others.

They make decisions easier.

They escalate wisely

They know what can be automated, what can be delegated, and what needs human judgment.

They learn in visible ways

They can show evidence of adaptation.

Not just courses taken, but workflows changed, results improved, tools adopted, and decisions sharpened.

They build cross-functional literacy

They can talk to product, ops, engineering, marketing, sales, and leadership without getting lost.

This matters because the market increasingly rewards people who can connect dots across systems.

The “Coordination Comfort Zone” Trap

One of the biggest risks in the next few years is not just automation.

It is staying stuck in low-leverage coordination work and mistaking it for strategic contribution.

This shows up when someone spends most of their time:

moving information around

managing status updates

organizing meetings

cleaning docs

chasing approvals

summarizing what already happened

keeping projects moving without shaping decisions

Some of that work will always exist.

But too much of it creates vulnerability.

Why?

Because AI is particularly good at compressing information-heavy coordination tasks.

Microsoft’s Work Trend Index is useful here again. The model of work it outlines moves AI beyond basic assistance and pushes humans toward directing agents, managing exceptions, and guiding workflows.

So the question to ask yourself is not:

“Am I busy?”

It is:

“Am I building skills that become more valuable when coordination gets cheaper?”

That is a much better career question.

What This Means for Different Types of Professionals

A few examples:

If you are early-career

Do not only optimize for being helpful.

Optimize for learning how decisions get made.

You want exposure to:

problem framing

customer insight

tradeoffs

prioritization

workflow design

feedback loops

You still need execution skills.

But execution alone is a weak moat.

If you are mid-career

This is where the pressure gets real.

A lot of mid-level roles were built around coordination, reporting, and process management.

Those layers may compress.

Your advantage will come from becoming sharper in one or more of these areas:

strategic thinking

system design

stakeholder management

commercial understanding

decision quality

domain expertise

If you are a manager

Your value is moving away from oversight and toward leverage creation.

That means:

redesigning work

helping teams use AI responsibly

coaching judgment

setting better decision frameworks

increasing team quality, not just team speed

If you are a specialist

You need two things:

real depth

translation ability

Depth matters because shallow knowledge is easier to mimic.

Translation matters because specialists who can explain implications clearly become more influential.

A Practical 90-Day Relevance Plan

If you want to become more valuable in the next three months, here is a practical reset.

Step 1: Audit your current work

List your recurring weekly tasks.

Put each one into one of three buckets:

Routine execution

Decision support

Human leverage

Be honest.

If most of your value sits in routine execution, that is not a moral failure.

It is just a signal.

Now you know where to build.
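If you like seeing the audit as numbers, here is a minimal sketch of it in Python. The task names, hours, and the `bucket_shares` helper are all hypothetical, made up for illustration; swap in your own week. It simply adds up hours per bucket and shows what share of your time each bucket takes.

```python
from collections import defaultdict

# The three buckets from Step 1.
BUCKETS = ("routine execution", "decision support", "human leverage")


def bucket_shares(tasks):
    """Return the fraction of weekly hours spent in each bucket."""
    hours = defaultdict(float)
    for name, bucket, weekly_hours in tasks:
        if bucket not in BUCKETS:
            raise ValueError(f"unknown bucket for {name!r}: {bucket}")
        hours[bucket] += weekly_hours
    total = sum(hours.values())
    if total == 0:
        return {b: 0.0 for b in BUCKETS}
    return {b: hours[b] / total for b in BUCKETS}


# Hypothetical week: (task, bucket, hours per week).
tasks = [
    ("status report", "routine execution", 4),
    ("meeting notes", "routine execution", 3),
    ("CRM follow-ups", "routine execution", 5),
    ("pricing recommendation", "decision support", 4),
    ("stakeholder 1:1s", "human leverage", 4),
]

shares = bucket_shares(tasks)
for bucket, share in shares.items():
    print(f"{bucket}: {share:.0%}")
```

In this made-up week, 12 of 20 hours sit in routine execution. The exact numbers matter less than the direction: rerun the audit every few weeks and watch whether the routine share is shrinking.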

Step 2: Automate or augment 20 to 30% of your routine work

Pick 2 to 3 recurring tasks where AI can help immediately.

Examples:

first drafts

research summaries

meeting notes

reporting templates

analysis formatting

CRM follow-ups

document cleanup

internal knowledge synthesis

The goal is not just saving time.

The goal is redirecting time into higher-value work.
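One rough way to choose those 2 to 3 tasks is sketched below. It assumes you can guess, for each routine task, what fraction of its time AI might save; those fractions, the task hours, and the `pick_tasks` helper are illustrative assumptions, not measurements. The sketch lists every pair or trio of tasks whose combined estimated savings land between 20% and 30% of your total routine hours.

```python
from itertools import combinations


def pick_tasks(routine, low=0.20, high=0.30):
    """List 2- and 3-task combos whose estimated savings fall in [low, high]
    of total routine hours, largest share first."""
    total = sum(hours for _, hours, _ in routine)
    if total == 0:
        return []
    picks = []
    for size in (2, 3):
        for combo in combinations(routine, size):
            saved = sum(hours * saving for _, hours, saving in combo)
            share = saved / total
            if low <= share <= high:
                names = [name for name, _, _ in combo]
                picks.append((names, round(share, 2)))
    return sorted(picks, key=lambda p: p[1], reverse=True)


# Hypothetical routine tasks: (task, hours per week, estimated fraction AI could save).
routine = [
    ("first drafts", 5, 0.5),
    ("meeting notes", 3, 0.7),
    ("reporting templates", 4, 0.4),
    ("document cleanup", 2, 0.5),
]

picks = pick_tasks(routine)
for names, share in picks:
    print(f"{share:.0%} of routine hours: {', '.join(names)}")
```

The savings estimates are guesses, so treat the result as a shortlist to try for a couple of weeks, then replace the guesses with what you actually observed.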

Step 3: Upgrade one decision skill

Pick one of these and go deep for 30 days:

prioritization

analytical thinking

business writing

stakeholder communication

recommendation writing

experiment design

tradeoff analysis

Do not try to improve everything at once.

One sharp upgrade is better than shallow progress everywhere.

Step 4: Build one reusable system

Create one repeatable workflow that combines:

your judgment

AI support

a clear output

For example:

a weekly market scan with your commentary

a structured customer insight synthesis

an AI-assisted decision memo template

a hiring evaluation workflow

a competitor analysis system

a content briefing engine

a project-risk review process

This matters because systems scale your value more than one-off effort does.

Step 5: Make your thinking visible

This is a big one.

Start showing how you think.

That could be through:

sharper internal memos

clearer recommendations

better written updates

decision summaries

postmortems

framework docs

portfolio work

public writing if appropriate

The market increasingly rewards visible judgment, not just hidden effort.

Interview Questions to Test if a Role Is Future-Aligned

This is useful if you are job searching now.

Ask questions like:

How is AI changing the day-to-day work in this role?

Which parts of this role are becoming more automated?

What higher-value skills are becoming more important on the team?

How do top performers in this role create leverage?

What decisions does this person own versus support?

How much of the role is coordination versus actual problem solving?

How is the team rethinking workflows, not just adding tools?

These questions do two things:

They help you assess the role

They signal that you understand where the market is going

That is useful.

A Self-Audit: Are You Becoming More Valuable or Just More Efficient?

A few questions worth asking yourself:

Am I using AI to reduce low-value work?

Am I getting better at judgment, or just faster at output?

Can I clearly explain the problems I solve?

Am I trusted with ambiguity?

Do I improve workflows, or just complete tasks?

Can I influence decisions across people and systems?

Is my value tied to one tool, or to how I think?

If too many of your honest answers point toward output rather than leverage, that is your signal.

Not to panic.

To reposition.

The Broader Point

A lot of people still talk about AI as if it creates a binary split:

humans win or machines win.

That is not the most useful lens.

A better lens is this:

The value chain of work is being reorganized.

Routine execution is moving down the stack.

Decision quality is moving up the stack.

Human leverage is becoming a bigger multiplier.

That is why the most valuable professionals in the next few years will probably not be the ones who resist AI.

They also will not be the ones who blindly outsource all thinking to it.

They will be the ones who learn how to work with AI while becoming stronger in the distinctly human layers of work:

judgment

context

communication

ownership

trust

adaptability

leadership

That is where the durable advantage is forming.

And the encouraging part is this:

These are learnable skills.

They take practice.

They take repetition.

They take intentional career design.

But they are not reserved for a small elite group.

They are available to anyone willing to shift from task execution toward leverage creation.

Closing Thought

The most common mistake people make in moments like this is assuming the future belongs only to the most technical people.

I do not think that is right.

The future belongs to people who can combine technical fluency with sound judgment and real human effectiveness.

That combination is harder to replace.

And in many organizations, it is still too rare.

So if you are thinking about your career in 2026, I would not ask:

“How do I compete with AI?”

I would ask:

“Which parts of my work are becoming cheaper, and which parts are becoming more valuable?”

That is the more useful question.

Because relevance in an AI-first job market is not about proving you can do everything manually.

It is about becoming the kind of person who can direct better work, make better decisions, and create better outcomes in a world where intelligence is suddenly abundant.

That is the shift.

And the people who understand it early will have a very real advantage.

—Naseema

Writer & Editor, AIJ Newsletter
