Hey friends, happy Monday.

Over the past year, I’ve noticed a pattern in conversations with founders building with AI.

It usually starts the same way.

There’s excitement. A breakthrough moment. A demo that feels like magic.

The model performs.

The outputs are sharp.

The prototype works beautifully in a controlled setting.

And for a while, momentum builds.

But then something quieter happens.

Six months later, revenue hasn’t meaningfully moved. Adoption is uneven. The team is still “experimenting.” The excitement fades into ambiguity.

Not because AI failed.

But because the workflow never carried enough economic weight to justify automation in the first place.

This is the uncomfortable truth most people don’t talk about.

Most AI ideas are technically feasible.

Very few are economically meaningful.

We’ve entered a world where building is easier than ever. Models are powerful. APIs are accessible. Infrastructure is abundant.

But abundance creates a new bottleneck.

Judgment.

Specifically, the judgment to decide which workflows deserve automation and which ones are distractions dressed up as innovation.

In my experience, the difference usually comes down to one question:

Is this workflow worth at least $10K?

Not in theory.

Not in future upside.

Not in slide-deck potential.

In actual economic friction.

If you improved this workflow by 30 percent, would revenue move? Would costs meaningfully decline? Would risk decrease in a measurable way?

If the answer is vague, that’s a signal.

If the answer is clear, you might have something.

This week’s edition is about building that instinct.

We’ll unpack:

The Economic Friction Test and how to apply it honestly

The 4 Filters of a $10K Workflow

A practical scoring model you can run in under an hour

Real examples of workflows that cross the threshold

A fast validation playbook to avoid wasting six months

And why most AI ideas fail quietly, even when the tech works

The goal is simple.

To stop building impressive AI features.

And start building economically meaningful ones.

Let’s start with the core principle.

— Naseema Perveen

The Big Idea

Here’s the shift that took me a while to internalize.

AI does not create value because it is intelligent. Plenty of intelligent systems never turn into meaningful businesses. AI creates value when it removes expensive friction.

That sounds simple, but it reframes how you evaluate every idea.

The strongest AI products rarely start with a model demo. They start with a workflow that is already painful — and already tied to money.

Someone is spending too much time on something repetitive.

Revenue is slipping because decisions are inconsistent.

Deals are lost because signals are buried.

Costs are rising because judgment does not scale.

That is where AI belongs.

When a workflow is:

frequent enough that small gains compound

expensive in terms of labor, delay, or lost opportunity

manual, stitched together by people and spreadsheets

judgment-heavy, where nuance matters more than rules

there is usually revenue hiding inside it.

Not theoretical revenue. Embedded revenue. The kind that shows up when friction is reduced.

On the other hand, there are workflows that look attractive but rarely monetize well.

They are occasional.

They are cosmetic.

They are loosely defined.

They are not directly tied to measurable outcomes.

These are the ones that produce impressive demos and underwhelming businesses.

You can spend months automating something that saves a few hours per week and feel productive the entire time. But if that workflow was never materially costly, the economic impact will remain small.

The fastest way to waste a year in AI is to automate something that was never costing enough to matter.

This is why I’ve come to believe the real skill in this era is not building AI systems.

It is spotting economic friction before everyone else does.

Once you see clearly where money is already leaking, delayed, or constrained, the technology becomes a tool.

And tools applied to leverage are what create durable value.

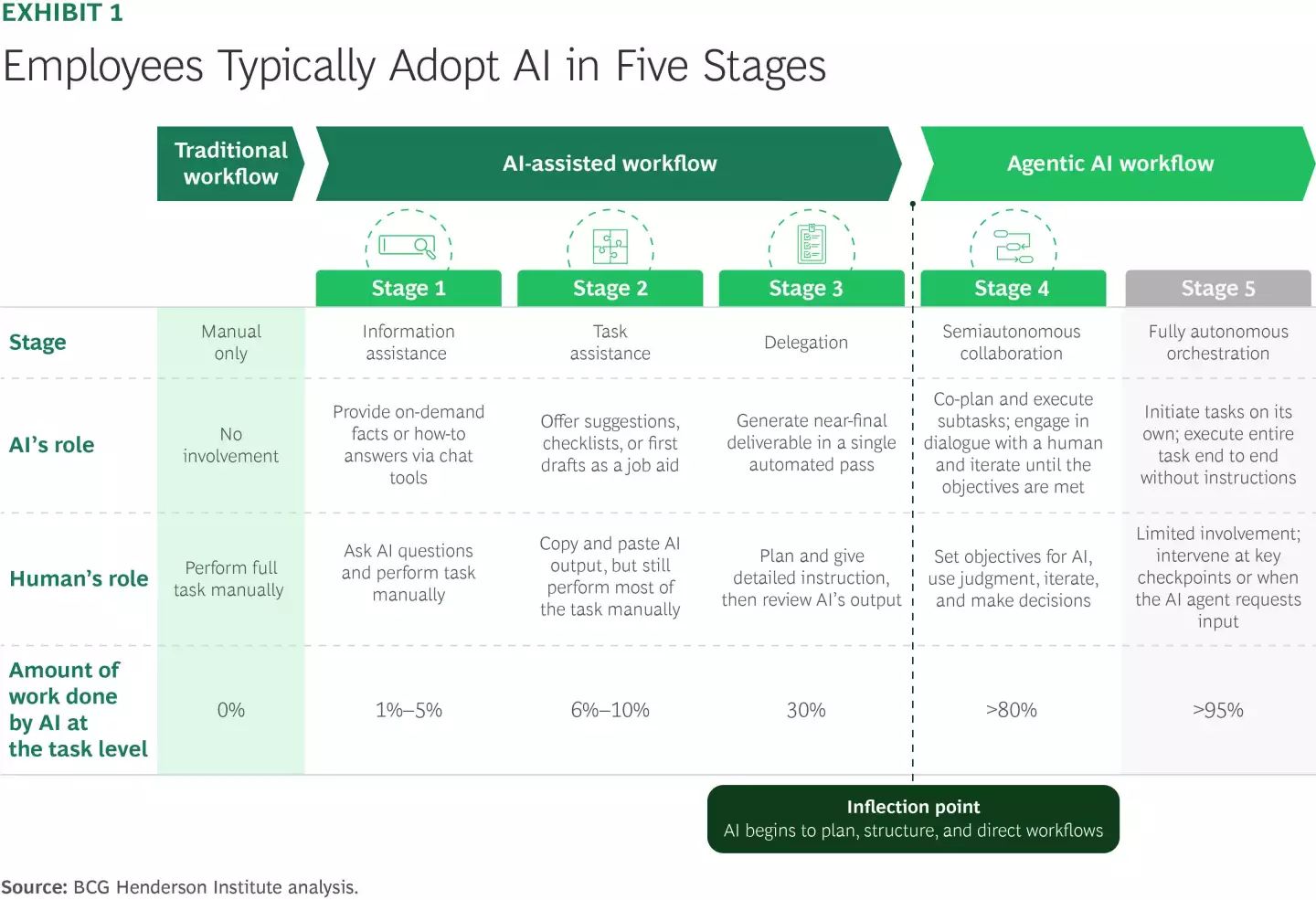

The Data

Why Most AI Projects Don’t Scale

Let’s ground this.

Research from Boston Consulting Group suggests that roughly 70% of AI initiatives fail to scale beyond the pilot stage. The issue is rarely model performance. It is economic alignment.

McKinsey & Company reports that companies capture the most AI value in functions tied directly to revenue or major cost centers: marketing, sales, customer operations, supply chain.

AI Improves Output — But Only Where It Matters

An experiment highlighted by Harvard Business School and Boston Consulting Group found that consultants using AI:

• completed tasks 25% faster

• produced 40% higher-quality outputs

However, the strongest gains appeared in tasks involving judgment, analysis, and decision-making, not routine automation.

Enterprise Leaders Are Prioritizing ROI Over Experimentation

According to reporting from Deloitte and the Financial Times, enterprises are shifting focus from AI experimentation to measurable returns.

Executives are increasingly asking:

• Where does AI reduce cost meaningfully?

• Where does it increase revenue directly?

• Which workflows justify sustained investment?

This shift is leading companies to cut low-impact AI initiatives and double down on high-leverage workflows.

In other words, value clusters around money.

Not novelty.

When AI is applied to:

lead qualification

pricing optimization

customer churn prediction

sales enablement

operational bottlenecks

the impact is measurable.

When AI is applied to:

“nice to have” summaries

light personalization

loosely defined dashboards

it struggles to convert.

The pattern is clear:

AI wins where friction is expensive.

The Framework

The 4 Filters of a $10K Workflow

Before committing to any AI initiative, it is worth slowing down.

The default instinct is to ask, “Can this be automated?”

The better question is, “Should this be automated?”

Technical feasibility is no longer the bottleneck. Most workflows can be automated in some form. The constraint is economic relevance.

To separate promising opportunities from distractions, every AI idea should pass through four filters. They are simple in structure, but rigorous when applied honestly.

These filters force clarity around leverage, not just capability.

They help eliminate ideas that are technically interesting but economically weak.

And they redirect focus toward workflows where automation can translate into measurable outcomes.

1. Frequency

Does This Happen Often Enough to Compound?

The first filter is almost boring.

How often does this workflow actually happen?

Daily?

Weekly?

Across dozens or hundreds of customers?

Repetition is leverage.

If something happens once a quarter, even perfect automation will struggle to justify meaningful investment. The math simply does not work.

But if a workflow happens 200 times per week, small improvements start to matter. A 10 percent efficiency gain compounds quickly. A 2 percent improvement in accuracy becomes visible over time.

This is where many AI ideas quietly fail. They solve edge cases, not core loops.

To pressure-test frequency, I usually ask three questions:

How many times per week does this occur?

How many people touch it?

How much time does each occurrence require?

Then multiply it out.

You will often discover that what felt minor is actually consuming significant time. Or the opposite — that something that felt painful only happens occasionally.

Frequency turns intuition into numbers.
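Here is a minimal sketch of that multiplication. It assumes time per occurrence is measured in minutes and that each person who touches an occurrence spends about the same time on it. The function name, parameter names, and input numbers are all illustrative, not measurements.

```python
def weekly_hours(occurrences_per_week: int, people: int, minutes_each: float) -> float:
    """Total person-hours per week consumed by one workflow."""
    return occurrences_per_week * people * minutes_each / 60


# Hypothetical inputs: 200 occurrences a week, 2 people each, 6 minutes per person.
hours = weekly_hours(200, 2, 6)
print(f"{hours:.0f} person-hours per week")               # 40 person-hours per week
print(f"{hours * 0.10:.0f} hours back from a 10% gain")   # 4 hours back from a 10% gain
```

Run the same three numbers for a once-a-quarter task and the total usually shrinks to almost nothing, which is the point of the filter.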

2. Financial Impact

Is Money Directly Connected to This Workflow?

This filter forces clarity.

Does this workflow directly affect revenue, cost, risk, or retention?

If the answer is vague, monetization will likely be vague too.

Strong AI opportunities tend to sit near financial levers:

pricing decisions

sales conversion

customer qualification

onboarding flows

support escalation

compliance checks

In these areas, even small improvements translate into measurable impact.

For example, improving lead qualification accuracy by 15 percent can shift conversion rates. Reducing onboarding friction can increase retention. Flagging compliance issues earlier can reduce downstream risk.

On the other hand, weak AI opportunities often sit near internal convenience:

cosmetic formatting

minor productivity tweaks

tools that save time but do not influence outcomes

There is nothing wrong with these. They can improve quality of life.

But they rarely build businesses.

Value concentrates where money moves. That is not philosophical. It is structural.

If you cannot draw a clear line from workflow improvement to financial outcome, you should pause.

3. Judgment Complexity

Is This Task Hard to Codify With Rules?

This is where AI earns its place.

If a workflow is fully rule-based — deterministic, binary, predictable — traditional automation may outperform AI. It will be cheaper, more stable, and easier to maintain.

AI shines when tasks require interpretation.

When the inputs are messy.

When context matters.

When prioritization requires nuance.

Examples include:

interpreting customer intent

classifying ambiguous support tickets

summarizing complex conversations

identifying risk signals across large datasets

detecting patterns that are not obvious

These tasks are judgment-heavy. And judgment is expensive to scale with humans alone.

When you combine judgment complexity with financial impact, you often have something interesting.

The key insight here is that monetizable AI opportunities tend to sit where rules break down.

If a problem is easily solved with if-then logic, it is probably not a high-leverage AI use case.

4. Pain Visibility

Does Anyone Care Enough to Pay?

This filter is less technical and more political.

Do stakeholders feel this problem?

Is it discussed in meetings?

Do people complain about it?

Is leadership asking for improvement?

Budget flows toward visible friction.

If the workflow fails silently, it will struggle to command attention or resources.

The strongest AI opportunities usually have clear symptoms:

sales complains about lead quality

support teams are overwhelmed

operations miss SLAs

leadership lacks clarity on performance

When pain is visible, urgency exists. When urgency exists, budgets follow.

Invisible problems rarely get funded, no matter how elegant the solution.

The Scoring Model

Turning Intuition Into Discipline

To make this practical, score each workflow across the four filters:

Frequency

Financial impact

Judgment complexity

Pain visibility

Rate each from 1 to 5.

Then add the four ratings for a total out of 20.

Anything scoring above 15 is worth serious exploration. It does not guarantee success. But it signals leverage.

Anything below 10 is likely a distraction.
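If it helps to see the arithmetic written down, here is a rough sketch of the scoring rule. The names are my own, the example ratings are hypothetical, and the label for totals from 10 to 15 is an assumption, since the thresholds above only cover the two ends.

```python
FILTERS = ("frequency", "financial_impact", "judgment_complexity", "pain_visibility")


def score_workflow(ratings: dict[str, int]) -> tuple[int, str]:
    """Sum four 1-5 ratings and label the total using the thresholds above."""
    for name in FILTERS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"{name} must be rated 1 to 5")
    total = sum(ratings[name] for name in FILTERS)
    if total > 15:
        label = "explore seriously"
    elif total < 10:
        label = "likely a distraction"
    else:
        label = "judgment call"  # assumption: the 10-15 band is not defined in the text
    return total, label


# Hypothetical ratings for illustration only.
print(score_workflow({"frequency": 5, "financial_impact": 4,
                      "judgment_complexity": 4, "pain_visibility": 4}))
# (17, 'explore seriously')
```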

This scoring model is not scientific. It is directional.

Its real value is forcing trade-offs.

Instead of chasing five ideas at once, you prioritize the one that sits at the intersection of repetition, money, complexity, and urgency.

Over time, this discipline compounds.

Because the difference between a technically impressive AI feature and a revenue-generating AI product is rarely the model.

It is where you choose to apply it.

And choosing well is the real edge.

Real Examples of $10K Workflows

Let’s make this concrete.

Example 1: Sales Call Review

Problem: Sales leaders cannot review all calls.

Frequency: High

Impact: High (conversion rates)

Judgment: High

Pain: Visible

AI can:

analyze transcripts

score objection handling

detect churn signals

surface coaching moments

Revenue upside is measurable.

Example 2: Pricing Experimentation

Problem: Pricing decisions rely on slow A/B cycles.

Frequency: Moderate

Impact: High

Judgment: High

Pain: Strategic

AI can simulate pricing scenarios, analyze elasticity signals, and surface patterns in historical conversions.

This affects revenue directly.

Example 3: Internal Status Summaries

Problem: Weekly reports take time to write.

Frequency: Moderate

Impact: Low

Judgment: Low

Pain: Mild

This is useful.

But not necessarily a $10K workflow.

It may save time, but rarely unlocks serious revenue.

The Builder Playbook

How to Identify a $10K Workflow This Week

One thing I’ve learned building and evaluating AI ideas is that clarity rarely comes from brainstorming.

It comes from structured pressure.

If you want to find a workflow worth automating, you do not need a whiteboard session. You need disciplined friction mapping.

Here’s a practical exercise you can run this week. It’s simple. It’s uncomfortable. And it usually surfaces one or two workflows that matter far more than the rest.

Step 1: List 10 Repetitive Workflows

Do Not Filter. Just Observe.

Start by writing down ten workflows that happen repeatedly across your organization.

Look across:

sales

marketing

operations

customer success

product

Avoid strategy language. Focus on tasks.

Not “improve onboarding.”

Instead: “review onboarding tickets.”

Not “optimize pricing.”

Instead: “analyze discount requests.”

You are looking for loops, not ideas.

At this stage, resist the urge to judge. Capture reality first.

The goal is to surface where time and attention are actually being spent.

Step 2: Attach Numbers

Turn Activity Into Economics

Now the uncomfortable part.

For each workflow, estimate:

hours per week spent on it

number of people involved

revenue touched directly or indirectly

cost of delay if the workflow fails or slows down

The math does not need to be precise. It needs to be directional.

Even rough estimates shift perception.

Something that felt minor might suddenly reveal 40 combined hours per week across the team.

Something that felt strategic might barely touch revenue at all.

This step forces translation.

From task → time

From time → cost

From cost → leverage

Most AI ideas collapse or strengthen right here.
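A back-of-the-envelope sketch of the time-to-cost translation might look like this. It covers only the labor side. The hourly rate and the 48 working weeks are placeholder assumptions, so swap in your own numbers. Revenue touched and cost of delay would be separate estimates.

```python
def annual_labor_cost(hours_per_week: float, hourly_rate: float,
                      weeks_per_year: int = 48) -> float:
    """Translate weekly time into a rough yearly labor cost."""
    return hours_per_week * hourly_rate * weeks_per_year


# Hypothetical: 40 combined hours per week at an assumed $50 loaded hourly rate.
cost = annual_labor_cost(40, 50)
print(f"${cost:,.0f} per year")                            # $96,000 per year
print(f"${cost * 0.30:,.0f} saved by a 30% improvement")   # $28,800 saved by a 30% improvement
```

With a smaller input, say 3 hours per week, the same math gives $7,200 per year, which is how a task that feels busy can turn out to be economically light.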

Step 3: Apply the 4 Filters

Pressure-Test the Survivors

Now bring in the framework.

For each workflow, score:

Frequency

Financial impact

Judgment complexity

Pain visibility

Be honest.

You will likely see one or two workflows clearly stand out.

Remove anything that scores weakly. Not because it is useless, but because focus compounds.

One strong workflow is worth more than five average ones.
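Ranking the list takes only a few lines. In this sketch the workflows and their ratings are made up for illustration, and keeping the top two is my assumption about what "one or two" means in practice.

```python
# Hypothetical workflows with made-up 1-5 ratings, in the order:
# (frequency, financial impact, judgment complexity, pain visibility)
workflows = {
    "review onboarding tickets": (4, 4, 4, 5),
    "analyze discount requests": (3, 5, 4, 4),
    "format weekly status report": (3, 1, 1, 2),
    "clean CRM export spreadsheet": (4, 2, 1, 2),
}

totals = {name: sum(ratings) for name, ratings in workflows.items()}
ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)

KEEP = 2  # assumption: carry forward only the top one or two
for name, total in ranked[:KEEP]:
    print(f"{total:>2}  {name}")
```

Everything below the cut stays on the list for later. It just does not get attention this quarter.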

Step 4: Interview the People Inside the Friction

This Is Where the Real Insight Lives

Before building anything, talk to the people closest to the workflow.

Ask questions that surface friction, not opinions:

What part of this process is most frustrating?

Where do delays typically occur?

Where do errors happen most often?

What does a bad outcome actually cost?

This is often where the real leverage appears.

You might discover that the bottleneck is not where you assumed. Or that the real pain is downstream, not upstream.

These conversations also surface language — the exact phrases people use to describe the friction. That language becomes invaluable when designing the solution later.

Step 5: Prototype in 48 Hours

Test the Core Hypothesis Quickly

Only after you have mapped friction should you test automation.

Use browser-based AI tools.

Upload real historical data.

Run 20–30 examples.

Observe:

Where does the model perform reliably?

Where does it break?

Are failures manageable?

The question is not perfection.

The question is viability.

If reliability does not cross a practical threshold, kill it early.

Do not rescue it with infrastructure.

If it works directionally — if it clearly reduces friction in a measurable way — deepen it.

Add structure. Add evaluation. Add guardrails.

But only after the economic case is visible.
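One way to keep yourself honest during the 48 hours is to write the bar down before you look at the results. The sketch below assumes you have manually marked each test output as acceptable or not. The 80 percent threshold and the 21-of-25 result are hypothetical.

```python
def reliability(results: list[bool]) -> float:
    """Share of test examples where the output was judged acceptable."""
    return sum(results) / len(results)


# Hypothetical review of 25 historical examples: True = acceptable output.
results = [True] * 21 + [False] * 4
THRESHOLD = 0.80  # assumption: set the bar before running the examples

rate = reliability(results)
verdict = "deepen it" if rate >= THRESHOLD else "kill it early"
print(f"{rate:.0%} acceptable -> {verdict}")  # 84% acceptable -> deepen it
```

The right threshold depends on what a bad output costs in that workflow, so treat the number as a judgment call rather than a standard.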

Why This Playbook Works

What I like about this approach is that it removes ego from AI exploration.

You are not chasing what feels impressive.

You are following friction.

You are following money.

And you are testing quickly enough that you do not sink months into something that never had the leverage to begin with.

The goal is not to automate more workflows.

It is to identify the one workflow where improvement changes outcomes.

That is the difference between building AI features and building AI businesses.

Where Founders Go Wrong

I’ve seen this play out enough times that the pattern is hard to ignore.

A founder discovers a powerful model capability. It can summarize. Classify. Generate. Analyze. The demo feels like the future.

And that’s usually where things drift.

Not because the technology is weak.

Because the economic reasoning is thin.

There are four common traps.

1. Chasing Novelty Instead of Friction

Novelty is seductive.

A clever use of AI feels like progress. It signals innovation. It earns attention internally.

But novelty rarely pays.

Friction pays.

The difference is simple. Novelty is about what AI can do. Friction is about what the business is already struggling with.

If you build something impressive that nobody urgently needs, adoption will be slow and monetization slower.

If you solve a workflow that is actively painful, people will pull the solution toward them.

That pull is what you want.

2. Building Infrastructure Before Validating Value

This one is subtle.

Founders assume that if they are serious about AI, they need architecture. Pipelines. Monitoring. Evaluation systems. Guardrails.

All of that is important.

But only after the workflow proves economically meaningful.

I’ve watched teams spend months building robust infrastructure around a workflow that barely moved revenue.

They built the system beautifully. They just built it around the wrong problem.

Value should precede infrastructure.

Reliability matters. But only once the economic case exists.

3. Optimizing Small Internal Workflows

Internal productivity gains feel safe.

Automating summaries. Cleaning spreadsheets. Generating reports.

These improvements are real. They reduce friction at the edges.

But they rarely transform economics.

There is a big difference between saving five hours per week and increasing conversion by three percent.

Both feel productive.

Only one compounds meaningfully.

The mistake is confusing internal efficiency with strategic leverage.
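To make that comparison concrete, here is a rough calculation. Every input is an assumption chosen for illustration: the hourly rate, the working weeks, the revenue base, and the reading of "three percent" as a relative lift that carries through to revenue.

```python
HOURLY_RATE = 50            # assumed loaded cost per hour, USD
WEEKS_PER_YEAR = 48         # assumed working weeks
ANNUAL_REVENUE = 2_000_000  # assumed revenue flowing through the funnel, USD

hours_saved_value = 5 * HOURLY_RATE * WEEKS_PER_YEAR
# Treats "three percent" as a relative lift that carries through to revenue.
conversion_lift_value = ANNUAL_REVENUE * 3 // 100

print(f"5 hours/week saved: ${hours_saved_value:,}")      # 5 hours/week saved: $12,000
print(f"3% conversion lift: ${conversion_lift_value:,}")  # 3% conversion lift: $60,000
```

The gap narrows or flips for a business with very little revenue, which is why the numbers need to be your own.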

4. Assuming Automation Equals Monetization

This is perhaps the most common misunderstanding.

Automation does not guarantee revenue.

It guarantees efficiency.

Revenue requires alignment.

If a workflow is not tightly connected to revenue, cost, risk, or retention, automation alone will not create monetization.

You can automate something perfectly and still fail to capture economic value.

Economic leverage, not automation itself, is the driver.

And leverage lives where money already moves.

The Compounding Advantage

Over the next few years, something predictable will happen.

Models will improve for everyone.

APIs will commoditize.

Access will equalize.

The technological playing field will flatten.

What will not flatten as quickly is judgment.

Specifically:

your understanding of which workflows truly matter

your iteration history on high-impact problems

your accumulated knowledge of where models fail

your economic intuition about what moves revenue

These assets are not downloadable.

They are built.

When a founder repeatedly identifies and validates $10K workflows, something interesting happens.

They stop chasing ideas.

They start spotting leverage.

They begin to see friction in economic terms.

That shift compounds.

Not because they use AI more.

But because they apply it with sharper allocation.

Over time, that allocation edge becomes a business advantage.

Closing Reflection

The future of AI product building will not belong to the teams with the flashiest demos.

It will belong to the teams that understand friction deeply.

Every business is full of inefficiencies.

Most of them are small.

Some are cosmetic.

Many are not worth solving.

But a few are quietly expensive.

They slow down revenue.

They create churn.

They introduce risk.

They drain capacity.

Your job is not to automate everything.

Your job is to identify the workflows where money is already leaking.

Fix those.

Automate those.

Monetize those.

If you want to build an AI product that actually matters, start with this question:

What workflow in your business is frequent, expensive, judgment-heavy, and painfully visible?

That is not just an automation opportunity.

That is your $10K opportunity.

And that is where leverage lives.

—Naseema

Writer & Editor, AIJ Newsletter
