👋 Hey friends, happy Friday.

Today’s topic is a little closer to my heart than usual.

Over the past two years, most of the AI conversation has centered on a single theme:

Faster.

Cheaper.

More efficient.

More scalable.

And in many ways, that’s been true. AI is compressing cycle times, accelerating decision-making, and helping teams ship more than ever.

But there’s an interesting second-order effect that’s easier to miss.

As we remove friction from work, we may also be changing how work feels.

Less repetition is good.

Less coordination overhead is good.

Less busywork is good.

But when effort becomes invisible, something subtle shifts.

This isn’t an argument against automation. It’s a question worth exploring:

If AI handles more of the cognitive heavy lifting, where does mastery live? Where does ownership show up? What does growth look like?

Today, I want to explore:

What automation might be doing to motivation and identity

Where this shift is showing up most clearly

The opportunity this creates for founders

And how to design AI-native systems that amplify humans instead of sidelining them

Let’s dig in.

— Naseema Perveen

IN PARTNERSHIP WITH WISPR FLOW

Investor-ready updates, by voice

High-stakes communications need precision. Wispr Flow turns speech into polished, publishable writing you can paste into investor updates, earnings notes, board recaps, and executive summaries. Speak constraints, numbers, and context, and Flow will remove filler, fix punctuation, format lists, and preserve tone so your messages are clear and confident. Use saved templates for recurring financial formats and create consistent reports with less editing. Works across Mac, Windows, and iPhone. Try Wispr Flow for finance.

The Data: What Automation Is Actually Doing to Work, Motivation, and Identity

Before we analyze the psychology, let’s ground this in numbers.

AI is not just accelerating workflows. It is restructuring how cognitive labor is distributed.

1️⃣ The Scale of Cognitive Automation

McKinsey & Company estimates that generative AI could automate tasks representing up to 30 percent of hours worked across the US economy by 2030. Critically, the majority of this impact is concentrated in:

Knowledge work

Professional services

Managerial and technical roles

This is different from previous automation waves. The Industrial Revolution automated muscle. The software revolution automated coordination. The AI wave is automating judgment-adjacent tasks.

That includes:

Drafting content

Synthesizing research

Writing code

Producing legal summaries

Generating financial analysis

We are not removing labor at the edges. We are automating the cognitive core.

2️⃣ The Productivity Gains Are Real

Researchers from Stanford University and MIT ran a large-scale field experiment with customer support agents and found that generative AI tools:

Increased productivity by 14 percent on average

Boosted productivity of lower-skilled workers by up to 35 percent

Improved customer sentiment scores

Similarly, research from Harvard Business School shows that consultants using AI assistance:

Completed tasks 25 percent faster

Produced higher quality outputs

Narrowed performance gaps between top and bottom performers

The first-order effect is clear: AI lifts output and compresses variance.

But that compression has implications.

When performance gaps narrow and baseline competence rises, differentiation shifts from output volume to judgment quality.

3️⃣ Engagement Is Not Improving at the Same Rate

Now look at engagement.

Gallup reports that global employee engagement remains around 23 percent, and in some regions it has declined since 2022 despite digital productivity gains.

Burnout indicators remain high, particularly in:

Technology

Professional services

Customer-facing roles

Meanwhile, Deloitte research on digital transformation shows that organizations focusing purely on efficiency metrics report:

Higher short-term performance gains

Lower long-term employee satisfaction

Increased turnover risk in knowledge roles

In other words:

Productivity is rising.

Engagement is not rising proportionally.

That gap matters.

4️⃣ What Motivation Science Tells Us

Decades of research in motivation psychology, particularly from Edward Deci and Richard Ryan, show that intrinsic motivation depends on three needs:

Autonomy

Competence

Relatedness

AI changes autonomy by:

Recommending actions

Predicting decisions

Framing options

AI changes competence by:

Generating first drafts

Reducing trial-and-error

Compressing skill-building cycles

AI changes relatedness by:

Replacing some human-to-human collaboration with human-to-model interaction

If competence is partially outsourced to the system, and autonomy is guided by recommendations, the psychological architecture of work shifts.

This is not necessarily harmful. But it is not neutral.

5️⃣ Skill Atrophy Risk

There is growing academic discussion around skill atrophy in AI-augmented environments.

Research in aviation and medicine has shown that high-automation environments can:

Reduce manual skill retention

Increase over-reliance on automated systems

Decrease situational awareness

The same dynamic may apply in knowledge work.

If junior engineers rely heavily on AI-generated code, their exposure to edge cases decreases. If marketers rely entirely on AI ideation, their ability to generate original positioning may weaken over time.

This is not immediate collapse. It is gradual erosion.

And gradual erosion is harder to detect.

6️⃣ The Identity Variable

There is another, less quantified but emerging dimension: professional identity.

A study from PwC found that workers who perceived AI as a collaborator rather than a replacement reported:

Higher job satisfaction

Greater optimism about future skill relevance

Lower anxiety around automation

The framing matters.

When AI is positioned as:

A tool that extends capability → confidence rises

A system that replaces expertise → defensiveness increases

Meaning and identity are not soft metrics. They influence retention, innovation, and long-term performance.

The Pattern

The data shows three simultaneous truths:

AI significantly increases productivity.

Engagement and meaning do not automatically increase with productivity.

Motivation depends on autonomy, competence, and ownership, all of which automation reshapes.

That tension is the foundation of this entire edition.

If AI removes friction but also reduces visible effort, we risk optimizing output while quietly destabilizing motivation.

The next generation of AI-native companies will need to design not only for efficiency, but for psychological sustainability.

And that is where the real opportunity begins.

The Psychological Shift Nobody Talks About

Automation used to replace physical effort.

Factories automated lifting.

Software automated paperwork.

Cloud tools automated coordination.

Now, AI automates cognition.

It writes.

It analyzes.

It reasons.

It drafts.

According to McKinsey & Company, up to 30 percent of hours worked in the US economy could be automated by 2030, with knowledge work absorbing most of the impact.

That includes:

Marketing

Engineering

Legal

Finance

Product

Customer support

The shift is subtle but profound.

People are spending less time creating from scratch and more time reviewing what AI has generated.

The difference appears minor. It is not.

There is a meaningful psychological gap between:

Building a slide deck

Editing a deck generated in fifteen seconds

Between:

Writing the code

Accepting a suggested diff

Between:

Solving the problem

Confirming the model’s answer

Over time, this alters how work feels.

Research from Gallup consistently links engagement to autonomy, mastery, and visible progress. Meanwhile, Self-Determination Theory developed by Edward Deci and Richard Ryan shows that intrinsic motivation depends on:

Autonomy

Competence

Relatedness

Automation changes all three.

If the system performs most of the heavy lifting:

Where does mastery accumulate?

Where does visible progress come from?

Where does effort sit?

This is the tension at the center of AI-native work.

Humans do not only want productivity. They want development. And development requires challenge.

Not waste. But challenge.

Industry Deep Dive

Let’s examine where the emotional cost is emerging most visibly.

1️⃣ Customer Support

AI systems now resolve 60 to 80 percent of Tier 1 tickets in many enterprise environments. Platforms like Intercom, Ada, and Forethought handle repetitive queries with increasing precision.

On paper:

Faster response times

Lower operational costs

Higher CSAT scores

But the composition of human work changes.

Support agents now disproportionately handle:

Escalations

Angry customers

Complex edge cases

They lose the quick wins: the manageable tasks that generate daily momentum.

Instead of solving forty small problems, they solve three emotionally charged ones.

Efficiency improves.

Emotional load increases.

The startup opportunity is not more automation. It is emotional instrumentation.

Imagine support dashboards that track:

Customer gratitude signals

Resolution impact

Agent recovery cycles

Positive feedback density

Meaning in support often comes from small victories. Current metrics overlook them.

2️⃣ Software Engineering

Developers increasingly rely on tools such as GitHub Copilot, Replit AI, and Cursor.

The gains are real:

Less boilerplate

Faster debugging

Shorter development cycles

But something subtle shifts.

Flow used to emerge from wrestling with complexity. Now it often emerges from steering suggestions.

Craft becomes curation.

Engineering identity has historically been tied to authorship and problem-solving. When the first draft is machine-generated, authorship can feel diluted.

The opportunity lies in tools that:

Expose reasoning chains

Visualize human edits versus AI baseline

Attribute judgment calls

Highlight intellectual fingerprints

Making invisible contribution visible may become a core feature, not a cosmetic one.
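To make "human edits versus AI baseline" concrete, here is a minimal Python sketch that diffs an accepted version against the AI's first draft and reports the share of final lines a human changed or added. It assumes both versions are kept as plain text; the function name `human_edit_share` is hypothetical, and line-level diffing with the standard library's `difflib` is a deliberately crude proxy: it counts edits, not the quality of the judgment behind them.

```python
import difflib


def human_edit_share(ai_draft: str, final: str) -> float:
    """Fraction of final lines that were not taken verbatim from the AI draft.

    Hypothetical metric: a final line counts as human-authored if the diff marks
    it as inserted or replaced relative to the draft.
    """
    draft_lines = ai_draft.splitlines()
    final_lines = final.splitlines()
    if not final_lines:
        return 0.0
    matcher = difflib.SequenceMatcher(a=draft_lines, b=final_lines, autojunk=False)
    changed = 0
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "insert"):
            changed += j2 - j1  # final-version lines not copied from the draft
    return changed / len(final_lines)


draft = "def area(r):\n    return 3.14 * r * r\n"
final = "import math\n\ndef area(r):\n    return math.pi * r ** 2\n"
print(f"{human_edit_share(draft, final):.0%} of final lines changed by the human")
# 75% of final lines changed by the human
```

Here the human added an import (plus a blank line) and rewrote the return statement, so three of the four final lines count as human-authored. A real tool would likely diff at a finer grain and pair the number with the reasoning behind each edit.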

3️⃣ Marketing and Creative Work

AI can now draft campaigns, headlines, positioning statements, and visuals instantly.

Brainstorming once required:

Whiteboards

Iteration

Dead ends

Rewrites

Friction forced originality. Constraints shaped voice.

Now, a prompt produces five directions in seconds.

The risk is not necessarily lower quality. It is flattened identity.

When creative cycles compress too aggressively:

Depth shrinks

Incubation disappears

Voice homogenizes

The opportunity is not slower AI. It is better design.

Creative platforms can preserve both speed and depth if they:

Encourage iteration before publishing

Track the evolution of ideas

Surface human influence in the final output

The Core Tension — Efficiency vs Meaning

Here is the paradox:

The more work AI performs, the less visible effort humans exert.

The less visible effort humans exert, the less visible growth they experience.

Humans interpret effort as progress.

Effort signals learning.

Learning signals mastery.

Mastery signals identity.

AI is optimized to remove effort.

So the real product design question becomes:

What friction should we preserve?

Not all friction is waste.

Some friction builds skill.

Some friction builds judgment.

Some friction builds confidence.

The companies that treat all friction as inefficiency may unintentionally erode the very motivation that drives long-term excellence.

What’s Your Take? — Here’s Your Chance to Be Featured in the AI Journal

As AI takes over more cognitive work, how should leaders design systems that preserve mastery and ownership?

We’d love to hear your perspective.

Email your thoughts to: [email protected]

Selected responses will be featured in next week’s edition.

Startup Opportunities in Emotional Infrastructure

We have optimized for output. The next layer is optimizing for significance.

Here are four practical opportunity areas.

1️⃣ Meaning Analytics Platforms

Instead of measuring only:

Tasks completed

Tickets closed

Lines of code

Measure:

Skill growth trajectories

Judgment contribution

Creative ownership

Improvement over AI baseline

In an AI-native world, showing humans their development becomes strategically critical.

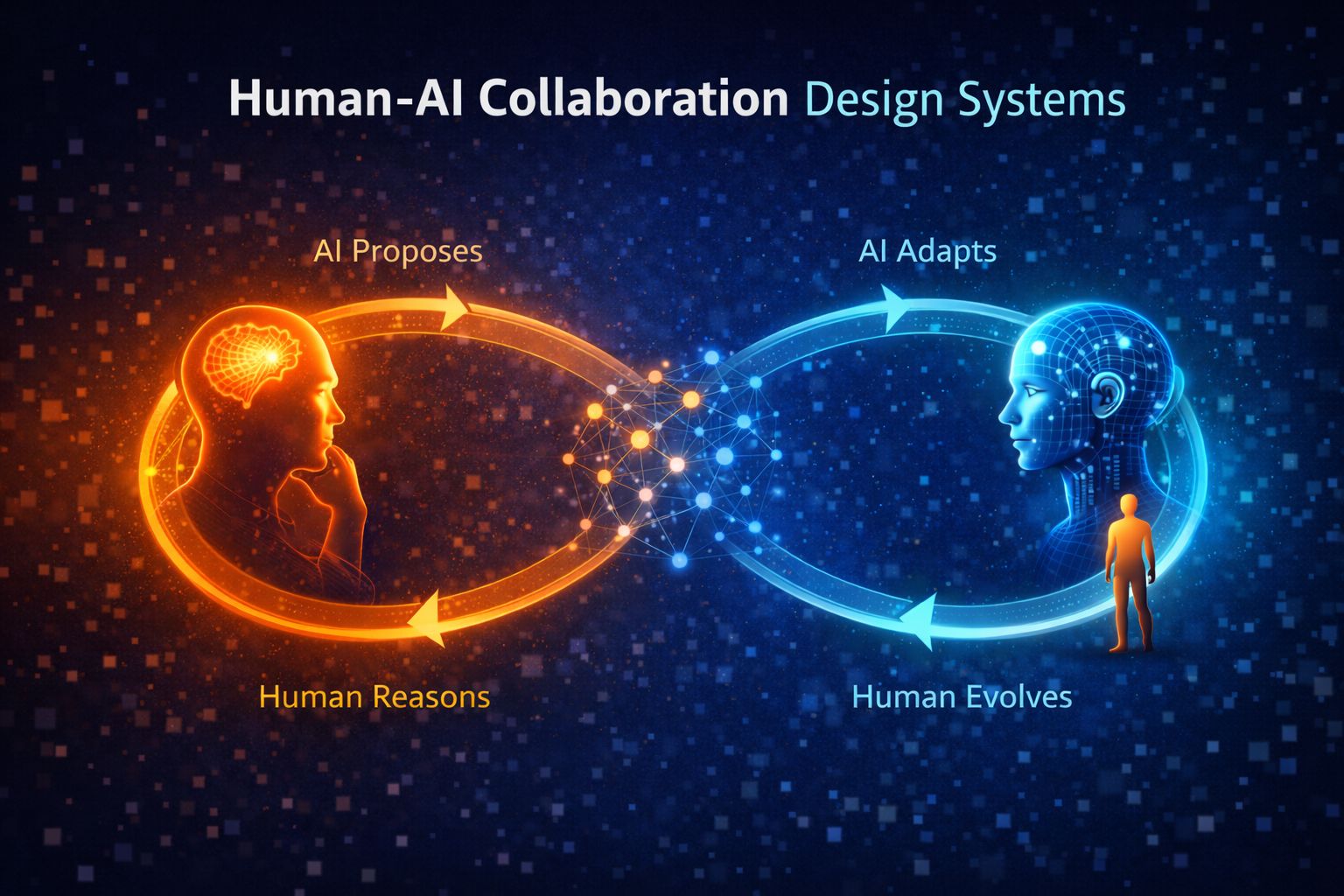

2️⃣ Human-AI Collaboration Design Systems

Move beyond:

AI replaces → Human reviews

Design for:

AI proposes → Human reasons → AI adapts → Human evolves

Log:

Why overrides occurred

What nuance was added

Where values shaped outcomes

That collaboration layer becomes your defensibility.

3️⃣ Skill Amplification Engines

Automation does not need to eliminate challenge. It can structure it.

Design systems that:

Gradually reduce scaffolding

Increase complexity over time

Provide feedback loops that teach

Think of automation as training equipment rather than autopilot.
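One way to "gradually reduce scaffolding" is to tie the level of AI assistance to recent performance. The sketch below assumes four hypothetical assistance tiers and a simple rule: step up after a high success rate over the last five tasks, step back after a low one. The tier names, window size, and thresholds are illustrative choices, not recommendations.

```python
from dataclasses import dataclass, field

# Hypothetical assistance tiers, ordered from most to least scaffolding.
ASSIST_LEVELS = ["full_draft", "outline_only", "hints_only", "review_only"]


@dataclass
class Learner:
    level: int = 0  # index into ASSIST_LEVELS
    history: list[bool] = field(default_factory=list)  # True = task done well

    def record(self, succeeded: bool, window: int = 5,
               promote_at: float = 0.8, demote_at: float = 0.4) -> str:
        """Log one task outcome, adjust scaffolding, and return the current tier."""
        self.history.append(succeeded)
        recent = self.history[-window:]
        if len(recent) == window:
            rate = sum(recent) / window
            if rate >= promote_at and self.level < len(ASSIST_LEVELS) - 1:
                self.level += 1         # less help, more challenge
                self.history.clear()    # earn the next step at the new level
            elif rate <= demote_at and self.level > 0:
                self.level -= 1         # restore support before frustration sets in
                self.history.clear()
        return ASSIST_LEVELS[self.level]


learner = Learner()
for _ in range(5):
    level = learner.record(True)
print(level)  # outline_only
```

Clearing the history after each change means a person has to demonstrate competence again at the new level before the system adjusts further, which keeps the challenge from ramping too quickly.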

4️⃣ Identity-Preserving Workflows

Every role carries narrative identity.

Engineers build

Designers create

Marketers tell stories

AI-native tools should reinforce that identity.

Language matters.

Instead of “AI wrote this,” surface “You guided this.”

Subtle framing influences ownership, and ownership influences motivation.

The Founder Playbook

Building AI-native companies is not just a technical challenge. It is a philosophical one.

Most founders default to one metric: efficiency.

But in an environment where intelligence is abundant and automation is increasingly commoditized, efficiency alone will not create durable advantage.

Designing AI that amplifies humans requires discipline, intentional friction, and long-term thinking.

Here’s the expanded playbook.

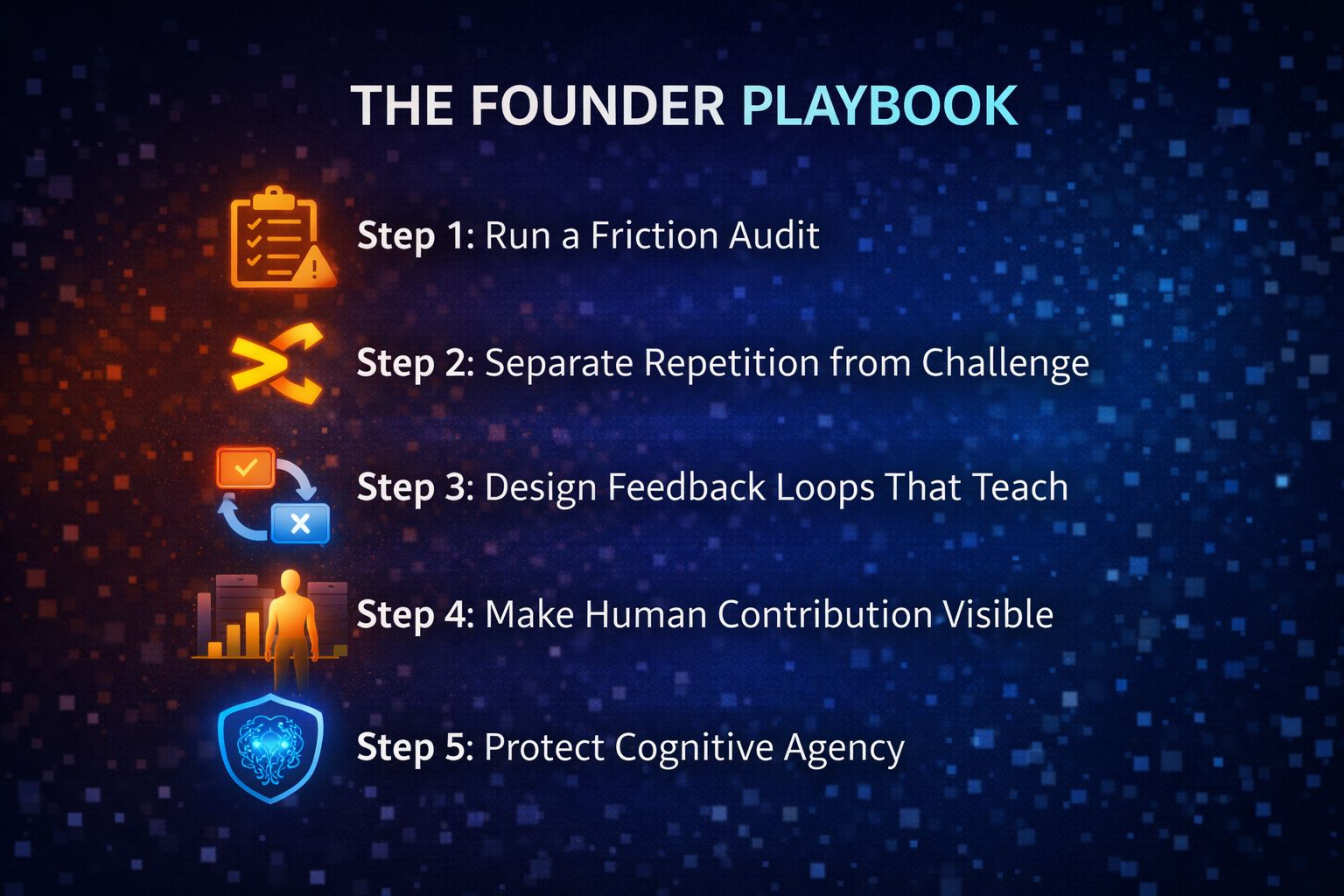

Step 1: Run a Friction Audit

Most teams assume friction equals waste. That assumption is dangerous.

Not all friction is inefficiency. Some friction is training. Some friction is identity formation.

For every workflow, ask:

Is this friction operational waste or developmental growth?

Does removing this eliminate learning cycles?

Does automation shrink psychological ownership?

Does this step build judgment, intuition, or mastery?

A useful mental model:

Waste friction: repetitive formatting, redundant coordination, mechanical data retrieval.

Growth friction: decision tension, creative iteration, strategic tradeoffs.

Automate waste.

Preserve growth.
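The audit questions above can be encoded as a simple checklist per workflow step. This is a minimal sketch under stated assumptions: the `WorkflowStep` fields mirror the audit questions, and the decision rule (any growth signal means keep the step, with repetitive-but-formative steps flagged for redesign rather than removal) is a hypothetical heuristic, not a validated scoring model.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStep:
    name: str
    repetitive: bool       # mostly mechanical, low-variance execution?
    builds_judgment: bool  # does it exercise judgment, intuition, or mastery?
    learning_cycle: bool   # would removing it eliminate a learning cycle?
    ownership: bool        # does doing it create psychological ownership?


def audit(step: WorkflowStep) -> str:
    """Hypothetical rule: automate pure waste, keep growth, redesign mixed steps."""
    growth = step.builds_judgment or step.learning_cycle or step.ownership
    if not growth:
        return "automate"  # waste friction
    return "redesign" if step.repetitive else "preserve"


steps = [
    WorkflowStep("format weekly report", True, False, False, False),
    WorkflowStep("choose pricing tradeoff", False, True, True, True),
    WorkflowStep("first-pass code review", True, True, True, False),
]
for step in steps:
    print(f"{step.name}: {audit(step)}")
# format weekly report: automate
# choose pricing tradeoff: preserve
# first-pass code review: redesign
```

The "redesign" outcome matters most: it marks steps where automation could absorb the mechanical part while the formative part stays with a person.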

If you remove every difficult step, you may also remove the very mechanism by which your team becomes exceptional.

Elite performance historically emerges from structured challenge, not seamless convenience.

Step 2: Separate Repetition from Challenge

Automation should target repetition.

Formatting

Data retrieval

Summarization

Boilerplate generation

Low-variance execution

These activities consume energy without expanding capability.

But challenge is different.

Strategic positioning

Complex tradeoffs

Ethical decisions

Creative framing

Systems design

Challenge builds capability. It compounds judgment.

If AI replaces challenge, skill growth plateaus.

The founder’s role is to ensure that automation removes cognitive drag without removing cognitive stretch.

A helpful test:

If this task disappeared entirely, would the team lose an opportunity to improve?

If yes, redesign it instead of eliminating it.

Step 3: Design Feedback Loops That Teach

Most AI systems today follow a simple model:

AI → Output

The human becomes an approver.

That is a fragile design pattern.

Instead, build structured collaboration:

AI → Suggestion

Human → Adjustment

System → Learn from adjustment

Every human override should be logged as:

A signal of nuance

A signal of values

A signal of contextual awareness

Over time, your system becomes more aligned with human judgment rather than merely optimized for statistical accuracy.

This creates two advantages:

Skill amplification for the user

Data defensibility for the company

The more your product learns from human refinement, the more difficult it becomes to replicate.
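Logging overrides as signals can start very simply. The sketch below assumes a hypothetical `OverrideEvent` record whose `signal` field uses the three categories above (nuance, values, context) plus a free-text reason, written as JSON Lines to any writable text stream. The schema and the example entries are illustrative; how the log later feeds evaluation or model tuning is left open.

```python
import io
import json
from collections import Counter
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Literal, TextIO

# Hypothetical categories mirroring the three signals described above.
Signal = Literal["nuance", "values", "context"]


@dataclass
class OverrideEvent:
    user_id: str
    ai_suggestion: str
    human_final: str
    signal: Signal  # which kind of human judgment the override reflects
    reason: str     # the human's own explanation of "why"
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def log_override(event: OverrideEvent, sink: TextIO) -> None:
    """Append one override as a JSON line to a file or in-memory stream."""
    sink.write(json.dumps(asdict(event)) + "\n")


def signal_counts(jsonl: str) -> Counter:
    """Tally logged overrides by signal type."""
    return Counter(json.loads(line)["signal"] for line in jsonl.splitlines() if line)


# Illustrative entries only.
buf = io.StringIO()
log_override(OverrideEvent("u1", "Offer a 20% discount", "Offer a smaller discount",
                           "values", "Example reason: protect margin"), buf)
log_override(OverrideEvent("u2", "Close ticket", "Escalate to billing",
                           "context", "Example reason: customer reported a billing issue"), buf)
print(signal_counts(buf.getvalue()))
```

Keeping the "why" in the human's own words is the point: the tally shows where judgment is being applied, and the reasons are the raw material that makes the system harder to replicate.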

Step 4: Make Human Contribution Visible

One of the biggest risks in AI-native workflows is invisibility.

When AI generates the first draft, human effort becomes subtle.

Subtle effort often feels like no effort.

That perception erodes meaning.

Design visibility intentionally.

Build dashboards that show:

Human interventions per output

Improvements over AI baseline

Strategic decisions that changed outcomes

Error prevention moments

Make judgment measurable.

If humans can see their contribution, they experience growth.

If they cannot see it, they experience passivity.

Visibility reinforces identity. Identity reinforces engagement.
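A contribution dashboard ultimately needs per-person aggregates. Here is a minimal sketch, assuming each output is stored as a hypothetical `OutputRecord` holding an intervention count, a quality score for the unedited AI draft, a score after human work, and the number of errors the human caught. How those quality scores are produced (rubric, reviewer rating, downstream metric) is an open assumption, and the numbers in the example are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class OutputRecord:
    author: str
    interventions: int        # human edits or overrides on this output
    ai_baseline_score: float  # quality of the unedited AI draft (hypothetical rubric)
    final_score: float        # quality after human work, same rubric
    errors_caught: int        # issues the human flagged before shipping


def contribution_summary(records: list[OutputRecord]) -> dict[str, dict[str, float]]:
    """Aggregate per-author metrics for a 'human contribution' dashboard."""
    by_author: dict[str, list[OutputRecord]] = {}
    for r in records:
        by_author.setdefault(r.author, []).append(r)
    return {
        author: {
            "interventions_per_output": mean(r.interventions for r in rs),
            "avg_lift_over_baseline": mean(r.final_score - r.ai_baseline_score for r in rs),
            "errors_prevented": sum(r.errors_caught for r in rs),
        }
        for author, rs in by_author.items()
    }


records = [
    OutputRecord("ana", 3, 6.0, 8.0, 1),
    OutputRecord("ana", 1, 7.0, 7.5, 0),
    OutputRecord("ben", 0, 7.0, 7.0, 0),
]
for author, stats in contribution_summary(records).items():
    print(author, stats)
```

"Lift over baseline" is only meaningful if the AI draft and the final version are scored the same way, so the scoring method deserves as much design attention as the dashboard itself.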

Step 5: Protect Cognitive Agency

Automation can quietly remove agency.

Recommendation engines shape decisions.

Auto-complete narrows thought patterns.

Predictive systems frame available options.

If users only approve what AI suggests, they slowly shift from decision-makers to validators.

That is not amplification. That is dependency.

Preserve final authority.

Humans must retain the ability to:

Approve

Override

Redirect

Redefine objectives

AI scales execution.

Humans scale values.

If AI begins shaping objectives instead of supporting them, you are no longer building a tool. You are building a governor.

Agency must remain human.

Meaning as Defensibility

We are entering a phase where companies function as adaptive systems.

They will:

Observe behavior in real time

Detect anomalies

Adjust workflows automatically

Recommend strategy shifts

Founders will spend less time assigning tasks and more time designing feedback architectures.

Leadership will increasingly mean:

Defining intent

Encoding values

Designing learning systems

Here is the strategic shift.

In a world where intelligence becomes abundant, identity becomes scarce.

When every competitor can:

Automate onboarding

Generate marketing

Build features faster

Optimize support

Differentiation moves elsewhere.

It moves to how your system makes people feel.

Does it make them:

More capable

More influential

More developed

Or does it make them passive observers of machine output?

Meaning is not a soft concept.

Meaning influences:

Retention

Innovation velocity

Brand loyalty

Cultural resilience

Companies that optimize only for output will scale quickly.

Companies that optimize for meaning will endure.

Key Takeaways for Builders

Not all friction is waste. Some friction builds mastery.

Automate repetition, not growth.

Design for collaboration, not replacement.

Make human judgment visible.

Protect cognitive agency.

Meaning is becoming infrastructure.

Bottom line

The emotional cost of automation probably won’t show up all at once.

There won’t be a clear moment when work suddenly feels empty. More likely, the shift will be gradual.

Fewer visible struggles.

Fewer hard-earned breakthroughs.

Fewer moments where effort clearly translates into growth.

AI will continue to make work easier. That’s the point.

But easier doesn’t automatically mean more fulfilling.

Historically, many of the experiences that drive professional growth involve some level of tension:

Learning something difficult

Solving a complex problem

Navigating ambiguity

Making a call without a clear answer

If automation removes all of that tension, we may unintentionally remove some of the mechanisms that build mastery.

This doesn’t mean we should resist automation. It means we should be more deliberate about how we design around it.

The companies that thrive in the AI era likely won’t be the ones that automate the most. They’ll be the ones that automate thoughtfully — removing waste while preserving challenge.

In a world where output becomes abundant, judgment becomes more visible.

And in a world where intelligence is increasingly accessible, how your systems shape identity may matter more than how fast they ship.

That may be the real shift underway.

—Naseema

Writer & Editor, the AIJ Newsletter

What’s the biggest hidden risk of AI automation?

That’s all for now, and thanks for staying with us. If you have specific feedback, please let us know by leaving a comment or emailing us. We are here to serve you!

Join 130k+ AI and Data enthusiasts by subscribing to our LinkedIn page.

Become a sponsor of our next newsletter and connect with industry leaders and innovators.