👋 Hey friends, TGIF!

For most of the internet era, data centers were easy to ignore.

They sat in the background. Quiet, remote, and mostly invisible. If you were building a company, you focused on the obvious things: product, distribution, users, and growth.

You did not spend much time thinking about compute. It was cheap enough, available enough, and abstracted enough that it barely felt strategic. That was part of what made the software era so powerful. You could build something enormous without worrying too much about the physical infrastructure underneath it.

AI changes that.

Now, one of the most important questions in tech is no longer "What can you build?" It is "How much compute can you access?"

That sounds like a technical detail. It isn’t. It changes where power sits. It changes who can scale. It changes which industries start to matter again.

Because in the AI era, intelligence is no longer just software. It is infrastructure.

And once that clicks, data centers start to look very different. Not like boring utilities sitting in the background, but more like the new factories of the digital economy.

In today’s edition, we’ll explore:

why data centers are becoming one of the most strategic layers in the AI economy

how compute, chips, energy, and cooling are reshaping digital infrastructure

where the biggest startup opportunities are forming above and around the infrastructure layer

what builders should understand now if they want to build in this market

— Naseema Perveen

The Shift: From Software to Infrastructure

To understand why this matters, it helps to zoom out. The last two decades of tech were dominated by software.

That was the core story of the internet era.

Startups won by writing code, shipping quickly, and distributing products online. Once the product worked, it could scale to millions of users with relatively low marginal cost.

The hard part was rarely infrastructure.

It was usually:

building something people wanted

finding distribution

getting retention

standing out in crowded markets

Infrastructure existed, of course. But for most founders, it felt abstracted away.

Cloud providers handled the complexity. Servers were something you rented, not something you thought about strategically. Compute was important, but it was not usually the bottleneck.

AI changes that. Now, the product is no longer just software sitting on top of infrastructure. The product increasingly is the infrastructure.

Training and running modern AI systems requires a very different stack:

massive compute

specialized hardware

reliable energy

advanced cooling

physical capacity at scale

That reintroduces a constraint that software had spent years removing. For the first time in a long time, the physical layer matters again. And once the physical layer matters, the industry starts to look less like software and more like manufacturing.

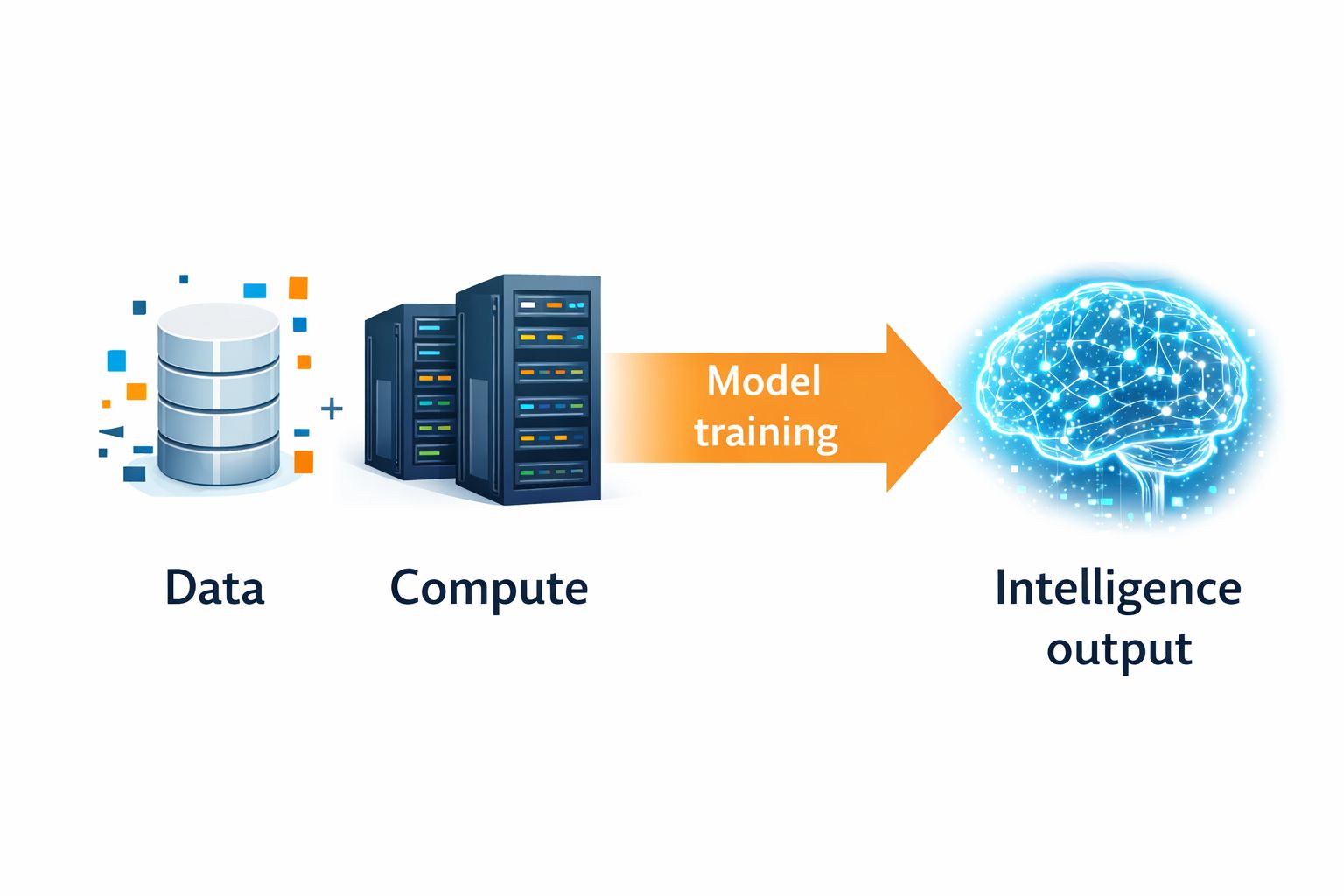

Data Centers as Factories

This is the mental model that makes the whole shift click.

A traditional factory takes:

Raw materials → Processing → Output

An AI data center does something very similar:

Data + Compute → Model training → Intelligence output

The outputs are different, but the structure is surprisingly familiar.

Instead of producing cars, textiles, or electronics, modern AI infrastructure produces:

predictions

recommendations

generated text

generated images

automated decisions

reasoning capacity

In other words, it produces intelligence at scale. That is why the “data centers as factories” analogy is so useful. Factories have always been about turning inputs into outputs efficiently.

So are AI data centers. They have the same kinds of pressures:

finite capacity

high input costs

throughput constraints

efficiency tradeoffs

utilization problems

optimization opportunities

A factory owner worries about machine uptime, energy use, and production efficiency.

An AI infrastructure operator worries about GPU availability, cooling efficiency, and inference cost.

Different machinery. Same logic.

That is also why this market is becoming strategic so quickly.

Once intelligence becomes an industrial output, whoever controls the production layer gains enormous leverage.

The New Bottleneck: Compute

For years, compute was treated like a utility.

You bought cloud credits.

You ran your software.

You moved on.

That mindset does not hold in the AI era. Today, compute is becoming a competitive advantage in its own right. The companies leading in AI are not just better at building models. They are better at securing the inputs required to run those models at scale.

That includes:

access to GPUs

access to data center capacity

access to energy

access to optimized hardware stacks

access to lower-latency infrastructure

This creates a new kind of moat. For most of the SaaS era, moats came from things like:

brand

product quality

user experience

network effects

distribution

Those still matter. But AI adds a new layer. Now, infrastructure control itself can become a source of advantage.

If two companies have similar models, the one with cheaper inference, better capacity, and more reliable infrastructure can move faster, serve more users, and protect margins more effectively.

That is a very different strategic environment. It means the winners may not just be the companies with the smartest software. They may be the ones with the strongest compute position.

Why This Is Happening Now

There are three major forces driving this shift.

1. AI workloads are fundamentally different

Traditional software workloads are relatively light.

A normal SaaS product serves web pages, moves data around, and runs business logic. It needs reliable infrastructure, but not usually enormous computational intensity.

AI workloads are different. Training a frontier model can require:

weeks or months of continuous computation

enormous power draw

highly optimized networking between chips

Even after training, inference at scale is expensive.

If millions of users are querying a model, every response has a real compute cost attached to it.

That changes the economics of the business.

In SaaS, the cost of serving one more user often felt negligible. In AI, one more user may carry a meaningful inference cost. That pushes compute from the background into the center of strategy.

It is no longer just an operating expense. It is a core business input.
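To make that contrast concrete, here is a minimal back-of-the-envelope sketch. Every figure in it (the subscription price, the request volume, the cost per request) is a made-up assumption chosen for illustration, not data from any real company.

```python
def monthly_inference_cost(requests_per_month, cost_per_request):
    """Compute cost attributable to one user's model queries."""
    return requests_per_month * cost_per_request


def gross_margin(price_per_user, serving_cost_per_user):
    """Fraction of revenue left after paying to serve one user."""
    return (price_per_user - serving_cost_per_user) / price_per_user


# Hypothetical numbers, chosen only to show the shape of the problem.
PRICE = 20.00               # monthly subscription, USD
SAAS_SERVING_COST = 0.50    # hosting and bandwidth per user, USD
REQUESTS_PER_MONTH = 600    # model queries per user
COST_PER_REQUEST = 0.01     # inference cost per query, USD

ai_serving_cost = monthly_inference_cost(REQUESTS_PER_MONTH, COST_PER_REQUEST)

print(f"SaaS margin: {gross_margin(PRICE, SAAS_SERVING_COST):.1%}")
print(f"AI margin:   {gross_margin(PRICE, ai_serving_cost):.1%}")
```

Under these assumed inputs, the SaaS product keeps roughly 97.5% of each subscription while the AI product keeps about 70%. The exact numbers do not matter. What matters is that the serving cost now scales with usage.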

2. Hardware is becoming specialized

The second force is hardware specialization. For a long time, general-purpose computing was good enough for most software companies.

Now it is not. AI requires specialized hardware such as:

GPUs

TPUs

AI accelerators

high-bandwidth memory systems

advanced networking fabrics

That makes infrastructure more strategic in two ways.

First, it becomes more expensive and harder to secure. Second, it creates performance differences that software alone cannot solve.

A company running on a generic stack may simply be at a disadvantage compared to one with optimized chips, networking, and inference infrastructure.

That is why the hardware-software boundary is suddenly interesting again. There is a growing opportunity for companies that help bridge that layer. Not everyone will build chips.

But many companies will build the orchestration, optimization, and tooling that makes specialized infrastructure usable.

That is where a lot of startup opportunity lives.

3. Energy becomes part of the equation

This may be the most underappreciated shift of all.

AI is making energy a tech problem again. Data centers consumed enormous amounts of electricity even in the normal cloud era. AI accelerates that dramatically. As model training and inference scale, energy becomes a serious constraint.

This introduces questions that sound less like software and more like industrial planning:

Where should data centers be located?

How do you secure cheap, stable power?

How do you cool high-density compute efficiently?

How do you expand capacity without waiting years?

How do you optimize energy use per inference?

These are not abstract questions. They shape cost structures, expansion speed, and long-term competitiveness. Tech companies are now thinking like industrial operators.

That is a big mental shift. For years, software wanted to escape the constraints of the physical world. AI is dragging the industry back into it.

Where This Shows Up in the Real World

This shift is already visible if you know where to look.

Big Tech is vertically integrating

Companies like Microsoft, Google, and Amazon are investing aggressively in data center expansion, custom chips, network optimization, energy agreements, and regional infrastructure buildout.

That is not normal software-company behavior.

It is infrastructure-company behavior.

They are not just shipping AI features.

They are building the industrial backbone required to produce and deliver intelligence at scale.

That matters.

Because once the largest companies start vertically integrating around infrastructure, it signals that the bottleneck has moved.

The bottleneck is no longer just product.

It is production capacity.

NVIDIA becomes critical infrastructure

NVIDIA is no longer just a chip supplier. It is effectively a supplier of intelligence capacity.

That is a very different role.

In the old world, chip companies were important, but usually one layer removed from the strategic center of software.

In the AI world, NVIDIA sits much closer to the center.

Access to GPUs is now one of the most important inputs in the AI economy.

That makes NVIDIA look less like a component maker and more like a strategic infrastructure provider.

It also tells founders something important:

When the underlying production layer becomes scarce, value shifts downward into the stack.

That is exactly what is happening now.

New players are emerging

This is not just a Big Tech story.

A new wave of companies is forming around the infrastructure layer.

They are building things like:

modular data centers

liquid cooling systems

inference optimization platforms

energy-aware scheduling tools

compute marketplaces

AI infrastructure management layers

This is where the next wave of startup opportunity is forming.

Not every founder needs to build a foundation model.

There is enormous room to build the tools, coordination layers, and efficiency systems that make intelligence production cheaper, faster, and more reliable.

That is often where the best startup opportunities sit.

One layer below the hype.

The Economics of the New Factory

Once you see data centers as factories, the economics become easier to understand. And those economics matter a lot.

Input costs

Every AI “factory” depends on expensive inputs:

GPUs and servers

electricity

land and facilities

cooling systems

networking equipment

These costs are not marginal. They shape the economics of the entire business.

In many AI companies, infrastructure cost is becoming a defining strategic variable.

Throughput

How much compute can you push through the system in a given period?

This is the equivalent of factory output.

More throughput means:

more training runs

more inference capacity

more customers served

faster experimentation

Throughput directly affects growth.

Efficiency

How much useful output do you get per unit of energy and hardware?

This is one of the most important questions in the stack.

Two companies might offer similar AI products, but the one with better efficiency will have:

lower costs

better margins

more pricing flexibility

more room to reinvest

Efficiency is not a side issue.

It is strategic.
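One way to put a number on "useful output per unit of energy and hardware" is cost per million generated tokens. The sketch below is a simplified model with hypothetical inputs (hardware rental price, power draw, electricity price, throughput). It ignores many real costs such as networking, cooling overhead, and staff.

```python
def cost_per_million_tokens(tokens_per_second, hardware_cost_per_hour,
                            power_kw, price_per_kwh):
    """Hardware plus electricity cost to generate one million tokens."""
    hourly_cost = hardware_cost_per_hour + power_kw * price_per_kwh
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical: both companies pay the same for hardware and power,
# but the second one has tuned its serving stack for higher throughput.
shared = dict(hardware_cost_per_hour=2.00, power_kw=1.0, price_per_kwh=0.10)

baseline = cost_per_million_tokens(tokens_per_second=1000, **shared)
optimized = cost_per_million_tokens(tokens_per_second=1500, **shared)

print(f"baseline:  ${baseline:.2f} per million tokens")
print(f"optimized: ${optimized:.2f} per million tokens")
```

With these assumed figures the optimized stack comes out around $0.39 per million tokens against roughly $0.58 for the baseline. The hardware and power bill are the same, and the cost structure is very different.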

Utilization

Factories are expensive when they sit idle.

So are data centers.

If expensive infrastructure is underutilized, profitability suffers quickly.

That means companies need to think carefully about:

workload balancing

capacity planning

peak usage patterns

idle resource management

This is where software meets industrial logic in a very direct way.
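The idle-factory point can be shown with one line of arithmetic: the effective cost of a useful GPU-hour is the hourly cost divided by utilization. Here is a minimal sketch, where the $2.00 hourly cost is an assumed figure for illustration only.

```python
def effective_cost_per_useful_hour(hourly_cost, utilization):
    """Cost of one productive hour when the hardware is busy only part of the time."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_cost / utilization


HOURLY_COST = 2.00  # hypothetical cost of owning or renting one GPU for an hour, USD

for utilization in (0.9, 0.6, 0.3):
    cost = effective_cost_per_useful_hour(HOURLY_COST, utilization)
    print(f"{utilization:.0%} utilized -> ${cost:.2f} per useful GPU-hour")
```

At 30% utilization, the same hardware costs about three times as much per useful hour as it does at 90%. That is why workload balancing and capacity planning show up on the list above.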

What’s Your Take? — Here’s Your Chance to Be Featured in the AI Journal

If data centers become the “factories” of the AI economy, where do you see the biggest opportunity for new startups?

We’d love to hear your perspective.

Email your thoughts to: [email protected]

Selected responses will be featured in next week’s edition.

The Builder Opportunity

Most founders are not going to build GPUs.

They are not going to pour concrete, negotiate power contracts, or design next-generation cooling systems from scratch.

That is fine, because the biggest startup opportunities in this market may not sit at the bottom of the stack. They sit above and around the infrastructure layer.

This is a familiar pattern in tech.

In every major platform shift, a small number of companies own the deepest layer of infrastructure. The bigger startup wave usually happens one or two layers above that, where complexity is still high, demand is rising, and incumbents have left a lot of workflow pain unresolved.

That is exactly what is happening here.

AI infrastructure is becoming more expensive, more specialized, and more operationally complex. That creates openings for founders who can make the system easier to use, cheaper to run, and smarter to coordinate.

There are four especially interesting categories.

1. Compute orchestration

As compute gets more expensive, allocation starts to matter a lot more.

That sounds obvious, but it has real product implications.

A few years ago, most companies could afford to be relatively sloppy with infrastructure. Cloud bills mattered, but they rarely determined whether a business model worked. In AI, that changes quickly. Poor workload allocation, idle GPUs, or inefficient scheduling can destroy margins.

That creates demand for a new class of tools.

Companies need better ways to:

allocate compute resources dynamically

schedule workloads based on urgency and cost

shift tasks across available capacity

reduce idle infrastructure

optimize usage across different model types and environments

The closest analogy is what Kubernetes and cloud orchestration tools did for software infrastructure.

Before those tools, companies were managing servers manually or with brittle scripts. Cloud made infrastructure programmable. Orchestration made it manageable at scale.

AI now needs its own version of that shift.

There is room for products that answer questions like:

Which jobs should run where?

Which workloads can tolerate latency?

When should you use high-end GPUs versus cheaper alternatives?

How do you prioritize inference traffic versus model training?

How do you avoid paying premium prices for capacity you do not actually need?

This is not glamorous. It is incredibly valuable.

As AI spreads across products, the companies that can coordinate compute best will often outperform the ones with the flashiest models.
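To give a feel for the "which jobs should run where?" question, here is a toy greedy scheduler. It is only a sketch under stated assumptions: the `Pool` and `Job` types, the pool names, the prices, and the single "latency-sensitive" flag are all hypothetical, and a real orchestrator would also have to handle preemption, queuing, and far richer constraints.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    name: str
    price_per_gpu_hour: float
    free_gpu_hours: float
    low_latency: bool  # True if the pool is suitable for latency-sensitive work


@dataclass
class Job:
    name: str
    gpu_hours: float
    latency_sensitive: bool


def schedule(jobs, pools):
    """Greedy placement: each job goes to the cheapest eligible pool with room.

    Latency-sensitive jobs are placed first so they can claim scarce
    low-latency capacity. Mutates the pools' free_gpu_hours as it assigns.
    """
    placement = {}
    for job in sorted(jobs, key=lambda j: not j.latency_sensitive):
        eligible = [
            p for p in pools
            if p.free_gpu_hours >= job.gpu_hours
            and (p.low_latency or not job.latency_sensitive)
        ]
        if not eligible:
            placement[job.name] = None  # no capacity; the caller may queue or retry
            continue
        best = min(eligible, key=lambda p: p.price_per_gpu_hour)
        best.free_gpu_hours -= job.gpu_hours
        placement[job.name] = best.name
    return placement


# Hypothetical capacity and workloads.
pools = [
    Pool("premium", price_per_gpu_hour=4.00, free_gpu_hours=10, low_latency=True),
    Pool("spot", price_per_gpu_hour=1.50, free_gpu_hours=100, low_latency=False),
]
jobs = [
    Job("nightly-training", gpu_hours=80, latency_sensitive=False),
    Job("chat-inference", gpu_hours=8, latency_sensitive=True),
    Job("batch-eval", gpu_hours=30, latency_sensitive=False),
]

print(schedule(jobs, pools))
```

In this example the inference traffic takes the premium pool, the training run lands on cheaper capacity, and the evaluation job is left unplaced because nothing has room for it. Even a toy like this shows the tradeoffs a real product would have to manage.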

2. Efficiency software

This may be the most underrated category in the entire AI stack.

A lot of founders still assume the real value sits in bigger models. But in practice, small efficiency gains can create huge economic advantages.

If you can reduce inference cost by 15%, that matters.

If you can cut latency by 20%, that matters.

If you can compress workloads enough to use cheaper infrastructure, that really matters.

Why? Because AI margins are often infrastructure margins.

That creates a strong market for efficiency software, including tools for:

model optimization

inference routing

caching

quantization

workload compression

cost-aware model selection

Think about the economics here.

A company serving millions of requests per day does not need a dramatic breakthrough to unlock value. It just needs a better way to run what already exists.

That’s what makes this category so attractive.

The startup doesn’t need to beat frontier labs at model quality. It needs to help customers produce the same outcome with fewer resources.

And that is often a much more practical wedge.

In many infrastructure markets, the most valuable companies are not the ones that invent the deepest layer. They are the ones that make the whole system more efficient.

This could be one of those markets.
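Two of the tools listed above, caching and cost-aware model selection, are simple enough to sketch together. The example below is illustrative only: the `Model` type, the model names, the prices, and the 1 to 10 quality score are invented, and `run_model` stands in for whatever inference call a real system would make.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    cost_per_request: float  # hypothetical price, USD
    quality: int             # hypothetical 1-10 capability score


class CostAwareRouter:
    """Serve each request from cache if possible, else from the cheapest adequate model."""

    def __init__(self, models, run_model):
        self.models = sorted(models, key=lambda m: m.cost_per_request)
        self.run_model = run_model  # callable(model_name, prompt) -> str
        self.cache = {}
        self.spend = 0.0

    def answer(self, prompt, min_quality):
        key = (prompt, min_quality)
        if key in self.cache:
            return self.cache[key]  # cache hit: no inference cost
        for model in self.models:   # cheapest first
            if model.quality >= min_quality:
                result = self.run_model(model.name, prompt)
                self.spend += model.cost_per_request
                self.cache[key] = result
                return result
        raise ValueError("no model meets the requested quality")


def fake_run_model(model_name, prompt):
    """Placeholder for a real inference call."""
    return f"[{model_name}] {prompt}"


router = CostAwareRouter(
    [Model("large", 0.02, 9), Model("small", 0.001, 5)],
    fake_run_model,
)
print(router.answer("summarize this email", min_quality=4))   # routed to "small"
print(router.answer("summarize this email", min_quality=4))   # served from cache
print(router.answer("draft a legal analysis", min_quality=8)) # routed to "large"
print(f"total spend: ${router.spend:.3f}")
```

Nothing here improves model quality. It just avoids paying for the large model, or for any model at all, when a cheaper path gives the same outcome.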

3. Energy optimization

For a long time, energy was someone else’s problem.

That is no longer true.

As AI scales, energy stops being a background utility and becomes a front-line constraint. Data center economics are increasingly shaped by electricity availability, cooling requirements, and power efficiency.

That opens up a major software and systems opportunity.

Companies will need tools that help them:

optimize energy usage across workloads

predict demand spikes

manage cooling more efficiently

shift compute to lower-cost windows or locations

reduce waste across the infrastructure stack

This category matters because it touches both cost and feasibility.

As AI workloads grow, some infrastructure decisions will increasingly come down to energy logic, not just software logic. Where should a job run? When should it run? Which environments are most efficient? How do you balance throughput against power draw?

That means energy optimization could become a core part of AI infrastructure design.

And that creates startup opportunities at multiple layers:

monitoring and analytics

scheduling and routing

cooling optimization

energy-aware infrastructure software

hybrid compute planning across regions

Founders often underestimate how big this can get.

In a world where intelligence production is energy-intensive, anything that improves power efficiency becomes a strategic lever.
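The "when should it run?" question from this section can be sketched as a small search problem: given a forecast of hourly electricity prices, find the cheapest contiguous window for a deferrable job. The price list below is invented for illustration, and the function assumes the job draws constant power and cannot be paused once started.

```python
def cheapest_window(hourly_prices, duration_hours):
    """Return (start_hour, total_price) for the cheapest contiguous window.

    Uses a sliding window so each hour's price is added and removed once.
    """
    if not 0 < duration_hours <= len(hourly_prices):
        raise ValueError("duration must fit inside the price forecast")

    window_cost = sum(hourly_prices[:duration_hours])
    best_start, best_cost = 0, window_cost
    for start in range(1, len(hourly_prices) - duration_hours + 1):
        # Slide right: add the hour entering the window, drop the hour leaving it.
        window_cost += hourly_prices[start + duration_hours - 1] - hourly_prices[start - 1]
        if window_cost < best_cost:
            best_start, best_cost = start, window_cost
    return best_start, best_cost


# Hypothetical forecast, in cents per kWh, for the next eight hours.
forecast = [12, 11, 9, 6, 5, 7, 10, 14]

start, cost = cheapest_window(forecast, duration_hours=3)
print(f"start at hour {start}, total price index {cost}")
```

For this made-up forecast, a three-hour job is cheapest if it starts at hour 3. A production scheduler would combine this with deadlines, regional differences, and cooling load, but the core idea is the same: treat energy price as an input to scheduling.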

4. Developer abstraction layers

Most developers do not want to think about racks, cooling systems, cluster scheduling, or GPU utilization curves.

They want to build products.

That creates one of the most familiar and powerful opportunities in tech: abstraction.

Whenever infrastructure gets more complex, a new platform layer usually emerges to hide that complexity.

Cloud computing did this for servers. Stripe did this for payments. Twilio did it for communications. In each case, the winning companies made a hard infrastructure problem feel simple to developers.

AI infrastructure needs the same thing.

There is room for developer-first platforms that:

simplify access to compute

abstract away routing and provisioning complexity

offer clean APIs for inference and training

manage infrastructure decisions in the background

let teams focus on building use cases instead of managing hardware logic

This is where entirely new platform companies can emerge.

And importantly, this layer can compound.

Once a platform becomes the default interface between developers and compute, it gains distribution, workflow control, and potentially very attractive economics.

The winners here will not just provide access.

They will provide clarity.

They will make AI infrastructure feel usable in the same way cloud made servers usable.

That is a massive opportunity.

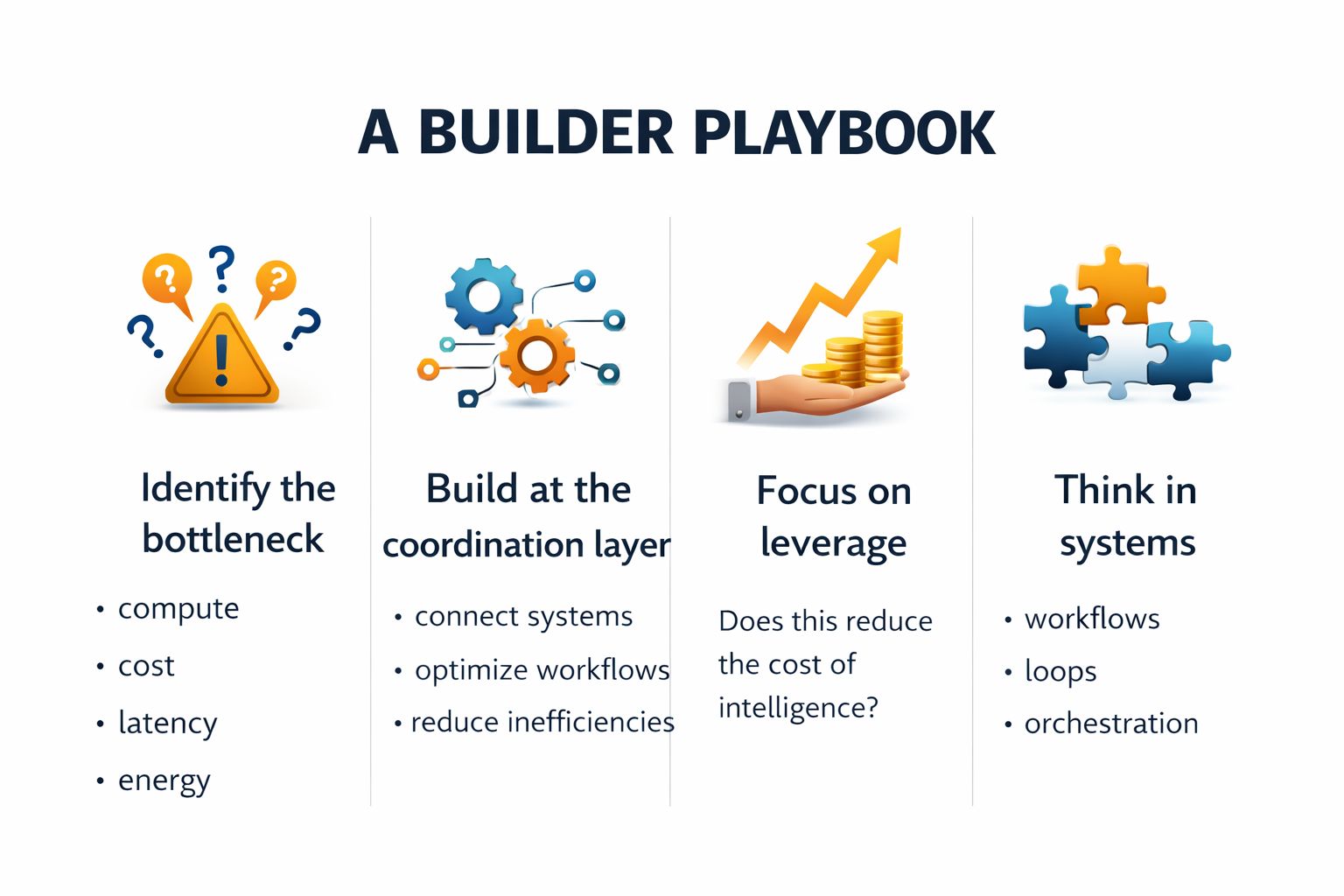

A Builder Playbook

If you’re exploring this space, here’s a practical way to think about it.

Not every founder needs a moonshot thesis. Sometimes it’s enough to find one painful constraint and solve it better than anyone else.

Step 1: Identify the bottleneck

Start with the constraint, not the technology.

Where exactly is the pain?

Is it:

compute access?

cost?

latency?

energy availability?

provisioning complexity?

poor utilization?

The best infrastructure companies are usually built around bottlenecks, not features.

This is especially true in AI.

A lot of founders will be tempted to start with a general pitch like “we’re building infrastructure for AI.” That’s too broad.

Instead, get specific.

Whose problem are you solving?

frontier labs?

startups serving inference-heavy products?

enterprises trying to manage internal AI workloads?

cloud providers trying to optimize usage?

data center operators trying to reduce waste?

The narrower and more painful the bottleneck, the better the wedge.

Step 2: Build at the coordination layer

The biggest value often comes from connecting systems, not replacing them.

This is one of the most important lessons in infrastructure markets.

You usually do not win by asking the market to throw away everything it already uses. You win by helping existing systems work together better.

That means looking for ways to:

orchestrate existing infrastructure

coordinate workloads across providers

reduce workflow inefficiencies

unify fragmented tooling

improve visibility into cost and performance

In many cases, the opportunity is not “build a better data center.”

It is “help companies use the data centers, chips, and models they already depend on much more intelligently.”

That’s a more realistic and often more scalable startup path.

Step 3: Focus on leverage

A simple question helps here:

Does this product reduce the cost of intelligence?

If yes, you’re probably in an interesting market.

That cost can be reduced in several ways:

lower inference cost

better hardware utilization

reduced latency

improved energy efficiency

faster deployment cycles

fewer wasted resources

The key is leverage.

Infrastructure startups are most powerful when they improve the economics of the entire layer beneath them.

If your product makes every AI workload a little cheaper, faster, or easier to run, that is real leverage.

And leverage compounds.

Step 4: Think in systems, not features

This is not a market where a single feature wins.

It’s a systems market.

The strongest products here will sit inside critical loops:

provisioning

orchestration

monitoring

optimization

feedback

That means founders need to think less like app builders and more like systems designers.

Ask:

Where does this product live in the workflow?

Does it become more valuable as usage grows?

Does it improve decision-making across multiple layers?

Can it evolve from tool to operating layer?

The best infrastructure companies are rarely point solutions forever. They expand by becoming more central to how the system operates.

That should be the ambition.

The Risks

This shift creates real opportunity, but it also comes with real constraints.

Capital intensity

Building at the hardware layer is expensive.

That means some parts of this market will be inaccessible to smaller startups. Not every company can afford to compete on physical infrastructure.

That’s why the layers above infrastructure are so important.

Concentration risk

A small number of players currently control a large portion of the stack:

chips

cloud infrastructure

major model providers

hyperscale data center networks

That creates dependency and platform risk.

Startups need to be careful about building businesses that are too exposed to one provider’s pricing, policy, or roadmap.

Energy constraints

This may become the biggest long-term limit.

Scaling AI requires a lot of power. If energy supply, cooling capacity, or regulatory constraints tighten, growth could be shaped by physical limits much more than most software founders expect.

That makes this market both exciting and difficult.

The Future

Over the next decade, data centers will likely become:

larger

denser

more specialized

more energy-aware

more strategically located

They will behave less like generic IT assets and more like industrial systems powering the digital economy.

And the companies competing in this market will increasingly be judged by one thing:

How efficiently can they produce intelligence?

That is the new production question.

Not just:

How good is your model?

But:

How economically can you run it?

How reliably can you scale it?

How intelligently can you allocate the underlying infrastructure?

That is why this category matters so much.

It is not just about supporting AI.

It is about defining the economics of AI.

The Takeaway

For years, software felt detached from the physical world.

AI is reconnecting the two.

The companies that win in this next era won’t just build better models.

They’ll build better infrastructure. Or they’ll build the orchestration, optimization, and abstraction layers that make that infrastructure dramatically more useful.

Because in the AI economy:

Compute is the new capital.

Data centers are the new factories.

And intelligence is the new output.

That is the shift.

And for builders, that is where the opportunity starts.

—Naseema

Writer & Editor, AIJ
